Regression Analytics: Explaining What Drives the Numbers

From correlation to causal interpretation

Department of Computer Science

University of the Philippines Cebu

"Every coefficient tells a story about cause and effect."

"All models are wrong, but some are useful."

— George E.P. Box, 1976

This week: how to make regression useful for explaining and predicting in the Philippine context.

Session 1 Objectives

Regression Fundamentals

The OLS equation, coefficient interpretation, and the meaning of “holding all else constant.”

Diagnostics & Assumptions

LINE assumptions (Linearity, Independence, Normality, Equal variance), residual plots, VIF, and when to trust your model’s output.

Philippine Application

Regional poverty model using PSA data: literacy, GDP, and urbanization as predictors.

By the end of this session you will be able to build, diagnose, and interpret a multiple regression model for any Philippine dataset.

Builds on: CMSC 173 ML + Weeks 5–6

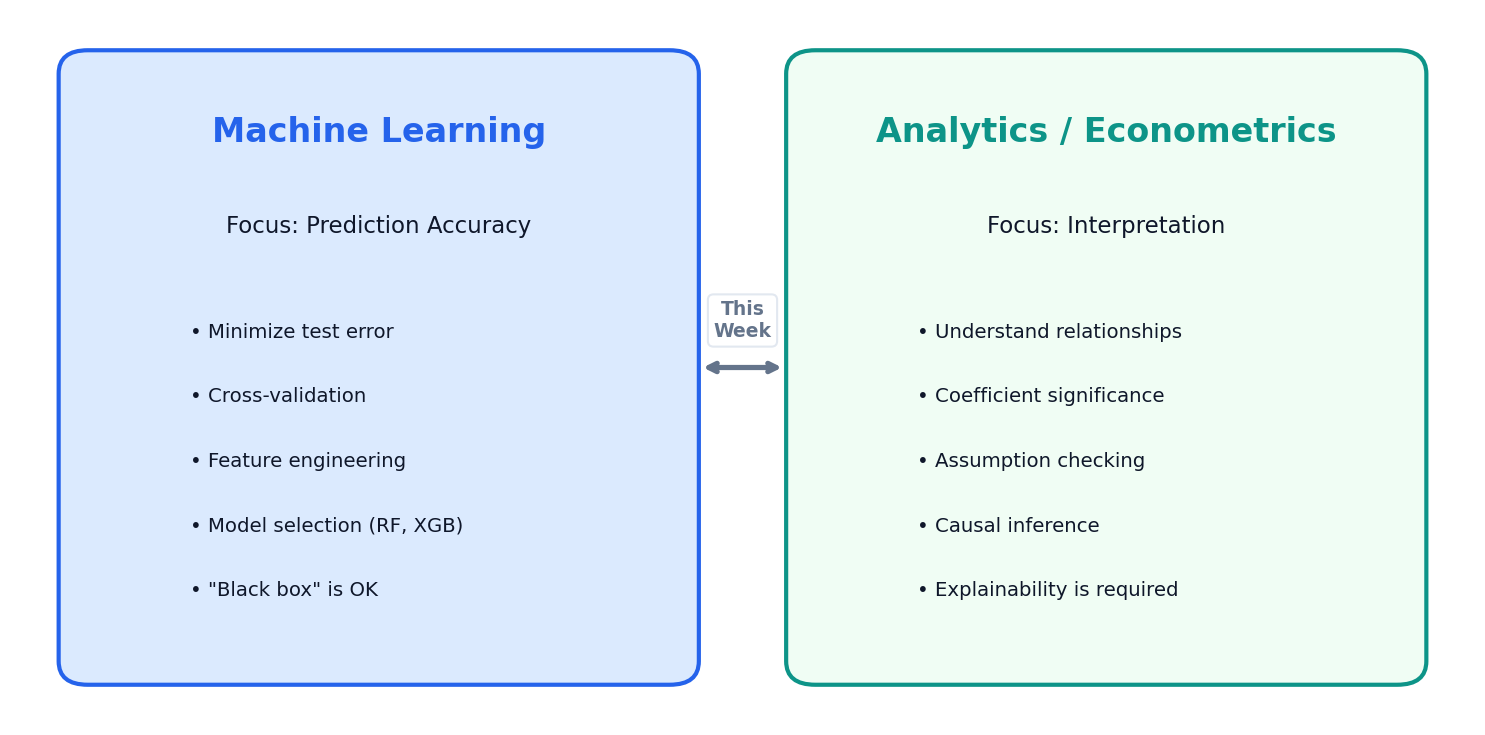

What Regression Answers

Regression in analytics is about explanation — not just prediction. The coefficients are the story.

ML Predicts; Analytics Explains

This Week’s Focus

Interpretation of coefficients, assumption checking, and communicating results to stakeholders.

From CMSC 173

You already know how regression works mechanically — now learn to read the story in the coefficients.

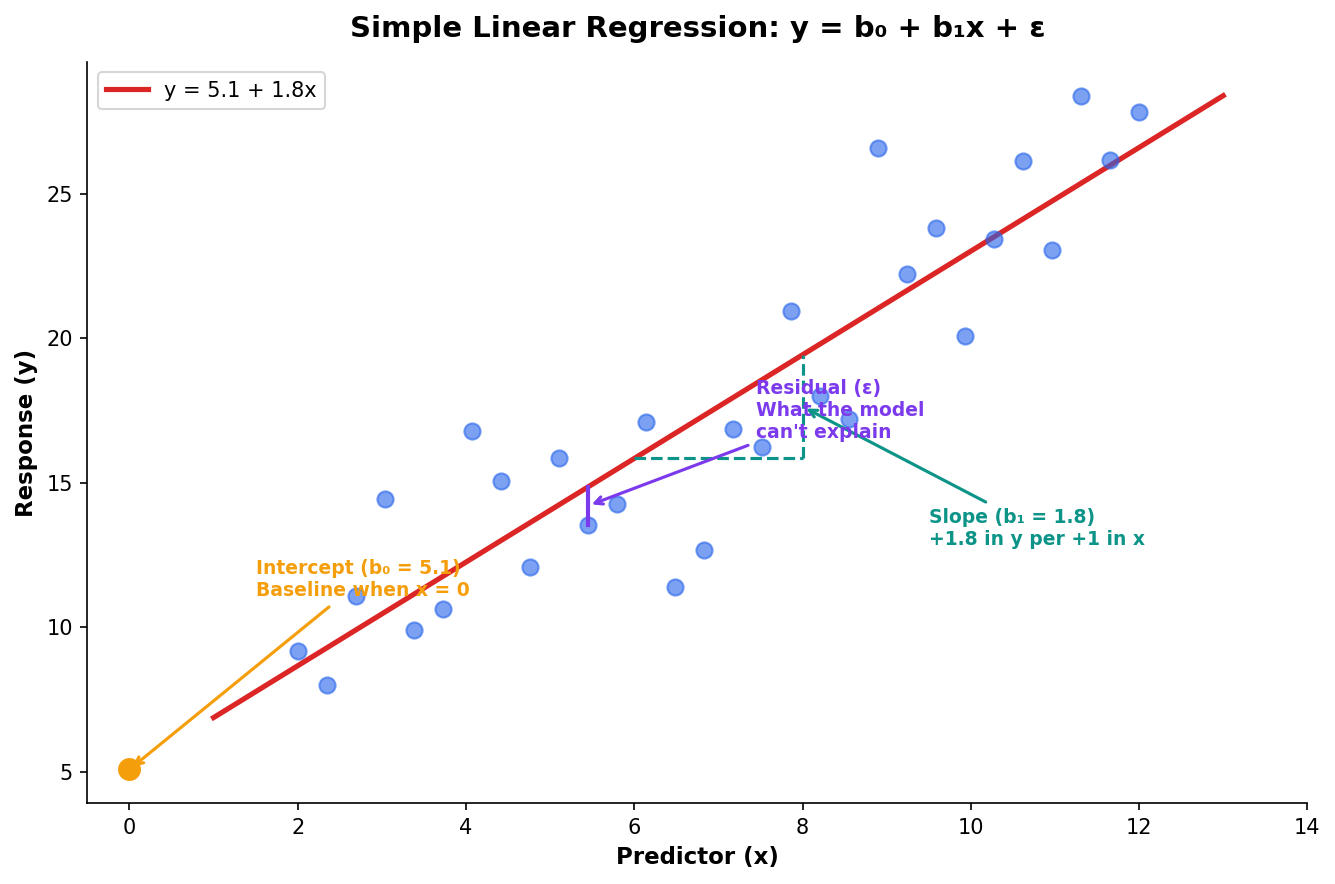

The Regression Equation Tells a Story

Every regression equation has three characters: the baseline (b₀), the effects (b₁, b₂…), and the unexplained (ε). Together they tell a story about what drives the outcome.

The Intercept (b₀)

The predicted value when all predictors are zero — often a theoretical baseline.

The Slope (b₁)

The change in y for a 1-unit increase in x, holding everything else constant.
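
A minimal sketch of the three characters in code: an ordinary least squares fit with NumPy that recovers the baseline (b₀), the effects (b₁, b₂), and the unexplained part (the residuals). The data are synthetic, and the names `ad_spend`, `store_size`, and `sales` are illustrative assumptions, not the course dataset.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumed for illustration): two predictors plus random noise.
n = 200
ad_spend = rng.uniform(0, 50, n)        # x1
store_size = rng.uniform(50, 300, n)    # x2
noise = rng.normal(0, 5, n)             # epsilon, the unexplained part
sales = 20 + 8.4 * ad_spend + 0.5 * store_size + noise

# Design matrix: a column of ones for the intercept b0, then the predictors.
X = np.column_stack([np.ones(n), ad_spend, store_size])
coef, *_ = np.linalg.lstsq(X, sales, rcond=None)
b0, b1, b2 = coef

# Residuals are the part of y the fitted equation does not explain.
residuals = sales - X @ coef
print(f"b0 = {b0:.2f}, b1 = {b1:.2f}, b2 = {b2:.2f}")
```

The fitted b₁ should land close to the 8.4 used to generate the data, which is the sense in which a slope describes the change in y per unit of x with the other predictor held fixed.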

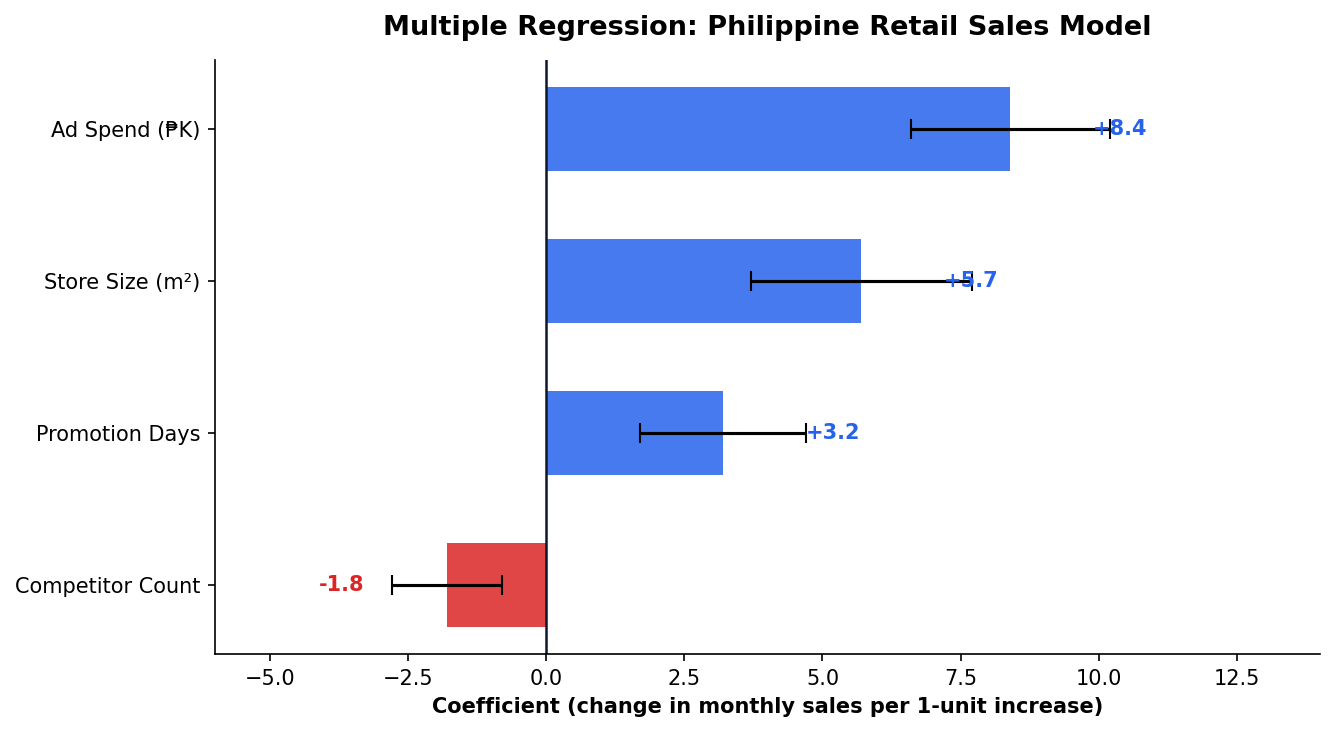

Each Coefficient Is a Ceteris Paribus Statement

How to Read This

Each bar shows how much monthly sales change per 1-unit increase in that predictor, with 95% confidence intervals.

The Key Phrase

Holding all else constant — this is what separates regression from simple correlation.

Interpreting Coefficients for Stakeholders

| Predictor | b | Translation |
|---|---|---|
| Ad Spend ₱K | +8.4 | Each additional ₱1K in ads → ₱8.4K more sales |
| Store Size m² | +5.7 | Each additional m² of floor area → ₱5.7K more sales, all else equal |
| Promotion Days | +3.2 | Each promo day adds ₱3.2K |
| Competitor Count | −1.8 | Each nearby competitor costs ₱1.8K |

Translation Rule

For every 1-unit increase in X, Y changes by b₁ units — holding all other variables constant.
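
The translation rule can be applied mechanically. The sketch below turns the coefficients from the table above into stakeholder sentences; the helper name `translate` and the assumption that sales are measured in thousands of pesos are illustrative.

```python
def translate(unit_phrase: str, b: float) -> str:
    """Turn one coefficient into a 'holding all else constant' sentence."""
    direction = "increase" if b > 0 else "decrease"
    return (
        f"Each additional {unit_phrase} is associated with a "
        f"₱{abs(b):.1f}K {direction} in monthly sales, "
        "holding all other variables constant."
    )

# Coefficients copied from the table above (sales assumed to be in ₱K).
rows = [
    ("₱1K of ad spend", 8.4),
    ("m² of store size", 5.7),
    ("promotion day", 3.2),
    ("nearby competitor", -1.8),
]

sentences = [translate(unit_phrase, b) for unit_phrase, b in rows]
for sentence in sentences:
    print(sentence)
```

The wording deliberately says "is associated with" rather than "causes", since the coefficients come from observational data.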

What Stakeholders Want

They don’t want coefficients. They want: “Should we spend more on ads or open a bigger store?” Your regression answers this.

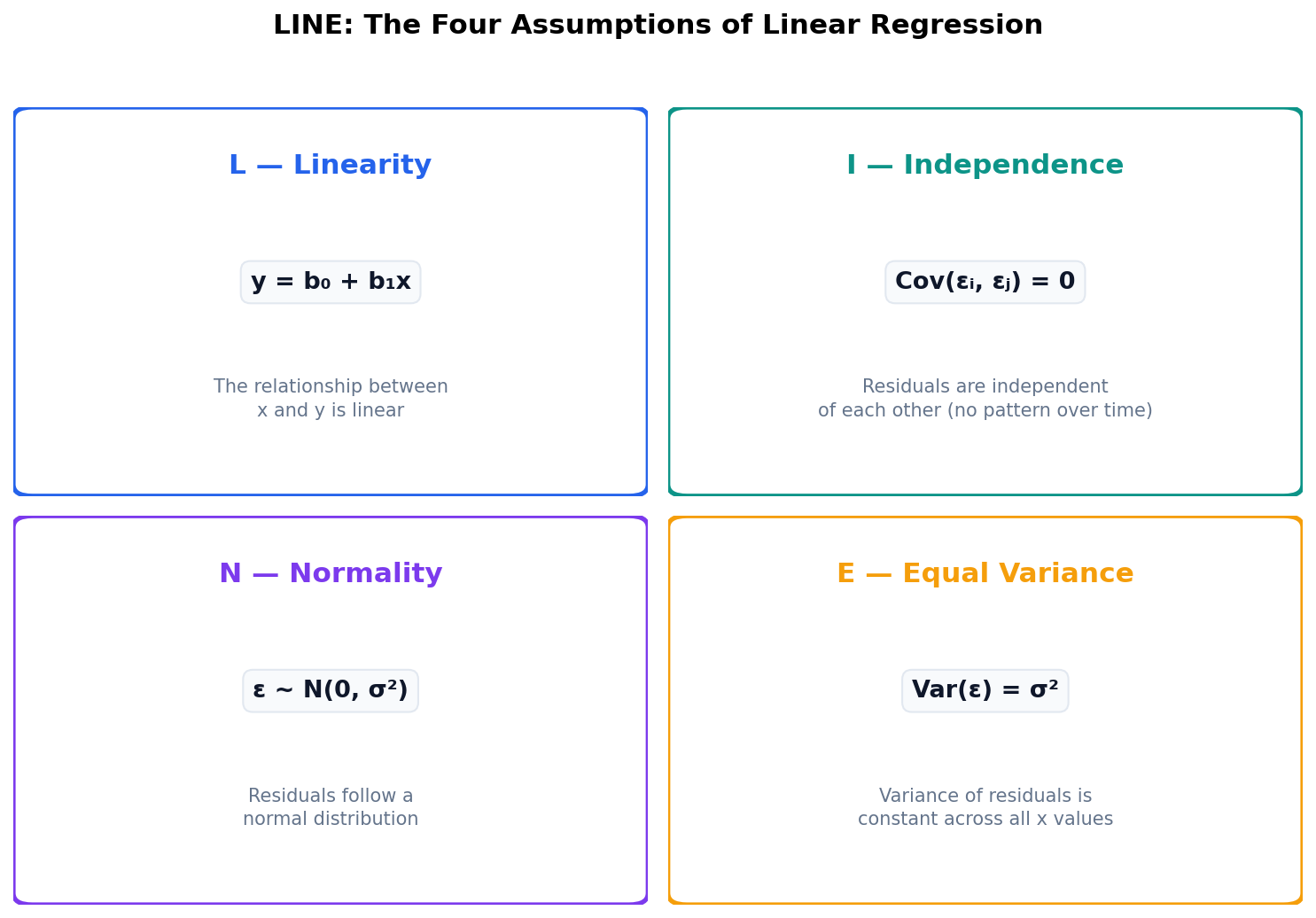

Trusting the Model

A regression is only as good as its assumptions. Visual diagnostics tell you when to trust — and when to stop.

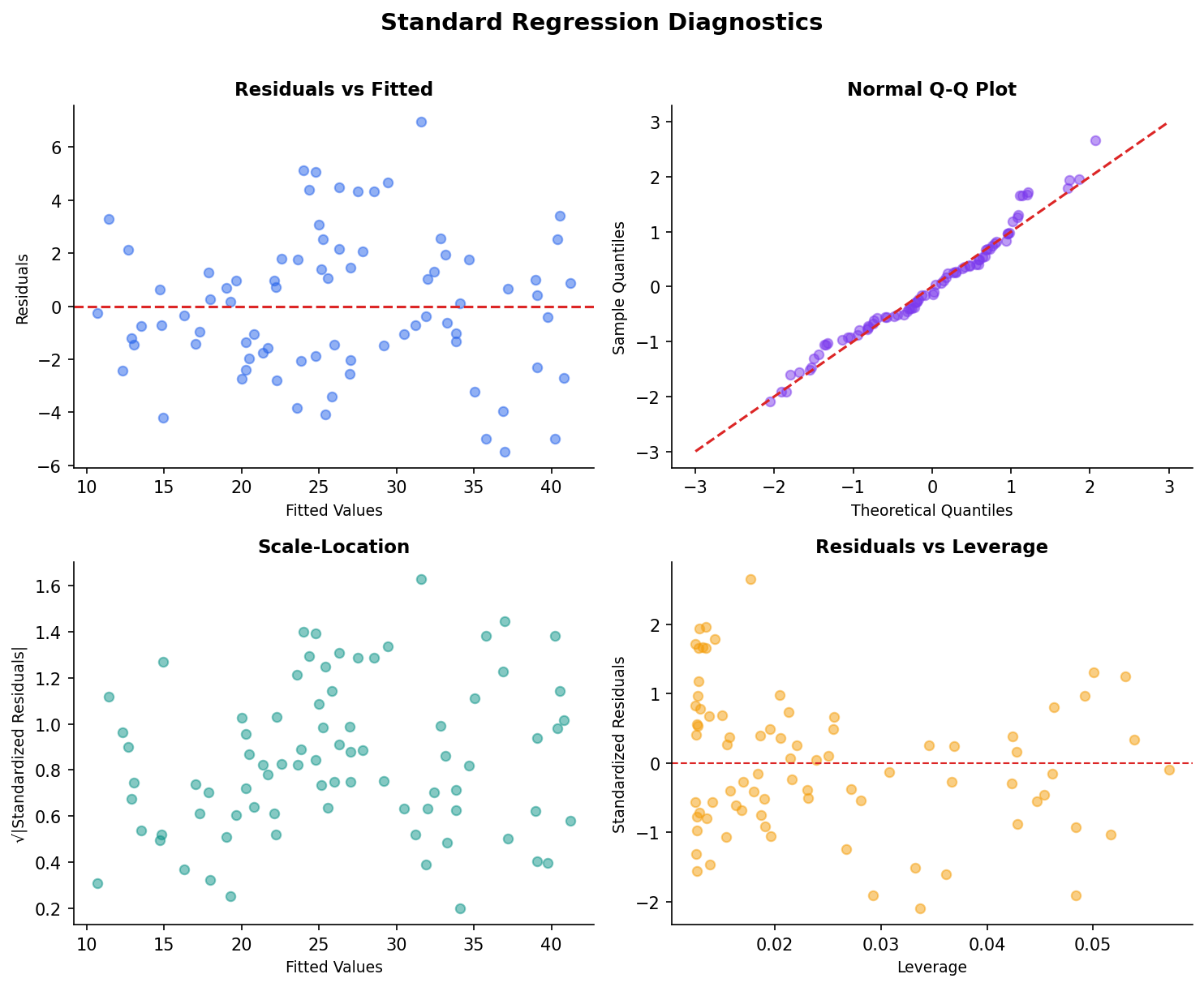

LINE: Four Assumptions You Must Check

Why It Matters

Violated assumptions → biased coefficients, wrong p-values, misleading conclusions.

The Good News

Visual diagnostics catch most violations quickly — no advanced math needed.

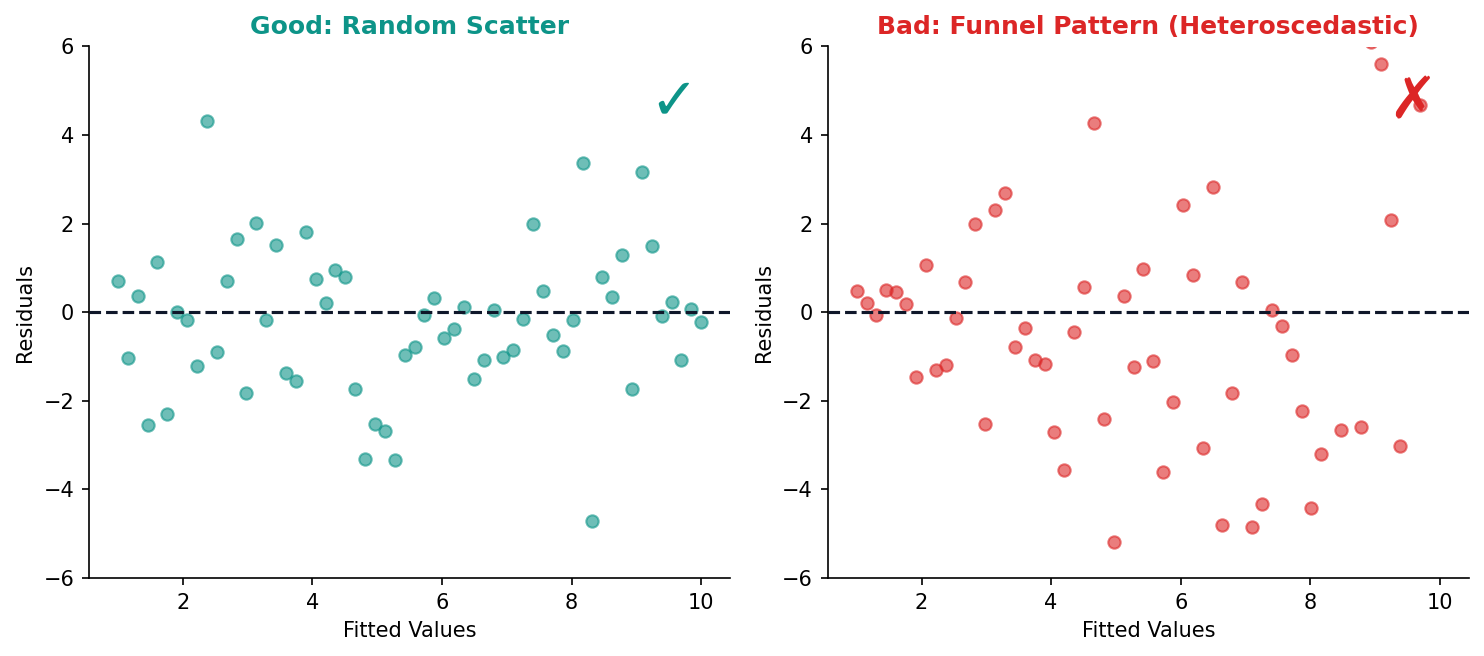

Residual Plots Reveal Hidden Problems

What to Look For

Patterns = problems. Random scatter around zero = the model is working. Any structure means the model is missing something.

Reading the Q-Q Plot

Points on the diagonal = normal residuals. Curved tails = heavy-tailed or skewed errors.

Good Residuals vs Bad Residuals

The residual plot is your first diagnostic check. Random scatter means the model’s assumptions hold. Patterns reveal specific problems.

Random scatter around zero — assumptions met.

Funnel shape — variance increases with x (heteroscedasticity). Fix: log-transform y or use robust standard errors.
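
A rough numeric companion to the funnel plot, as a sketch only: fit a line to synthetic data whose noise grows with x, then compare the residual spread in the low-x and high-x halves. The data, the median split, and the name `spread_ratio` are assumptions for illustration; this is a crude check, not a formal test.

```python
import random
import statistics

random.seed(1)

# Synthetic data (assumed): noise grows with x, which produces a funnel shape.
x = [random.uniform(1, 10) for _ in range(400)]
y = [3 + 2 * xi + random.gauss(0, 0.5 * xi) for xi in x]

# Simple least-squares line fitted with the closed-form formulas.
x_bar, y_bar = statistics.fmean(x), statistics.fmean(y)
sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
sxx = sum((xi - x_bar) ** 2 for xi in x)
slope = sxy / sxx
intercept = y_bar - slope * x_bar
residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]

# Compare residual spread below and above the median of x.
median_x = statistics.median(x)
low = [r for xi, r in zip(x, residuals) if xi <= median_x]
high = [r for xi, r in zip(x, residuals) if xi > median_x]
spread_ratio = statistics.pstdev(high) / statistics.pstdev(low)
print(f"residual spread, high x vs low x: {spread_ratio:.2f}")
```

A ratio near 1 is what random scatter would give; a ratio well above 1 matches the funnel described above.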

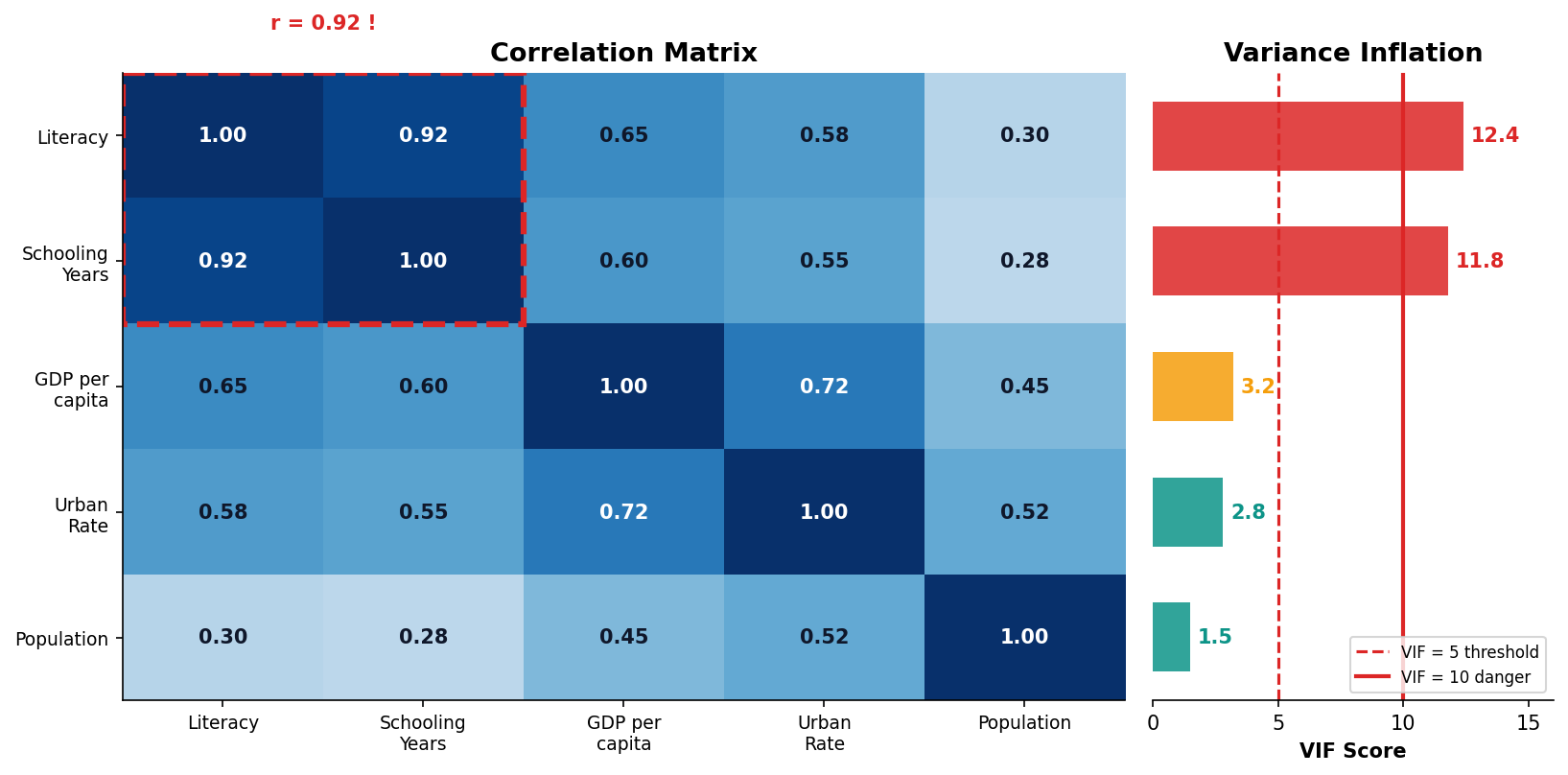

Multicollinearity Inflates Your Standard Errors

VIF Rule of Thumb

VIF > 5 → investigate. VIF > 10 → drop one of the correlated variables or combine them into a single index.

Philippine Example

Literacy rate and years of schooling have r = 0.92 (VIF > 12). Using both in the same model makes neither coefficient reliable.
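
For reference, VIF for predictor j is 1 / (1 − R²ⱼ), where R²ⱼ comes from regressing predictor j on the other predictors. The sketch below evaluates that formula; the function name is an assumption. With exactly two predictors, R²ⱼ is the squared pairwise correlation, so r = 0.92 on its own gives a VIF of about 6.5. Values above 12 need R²ⱼ above roughly 0.917, which would mean the other predictors in the model overlap as well.

```python
def vif_from_r_squared(r_squared: float) -> float:
    """VIF_j = 1 / (1 - R_j^2), with R_j^2 from regressing predictor j on the rest."""
    return 1.0 / (1.0 - r_squared)

# Two-predictor case: R_j^2 equals the squared pairwise correlation.
r = 0.92
vif_two_predictors = vif_from_r_squared(r ** 2)
print(round(vif_two_predictors, 2))  # 6.51

# R_j^2 values that correspond to the rule-of-thumb cutoffs.
for cutoff in (5, 10):
    print(cutoff, round(1 - 1 / cutoff, 3))
```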

Model Evaluation & Application

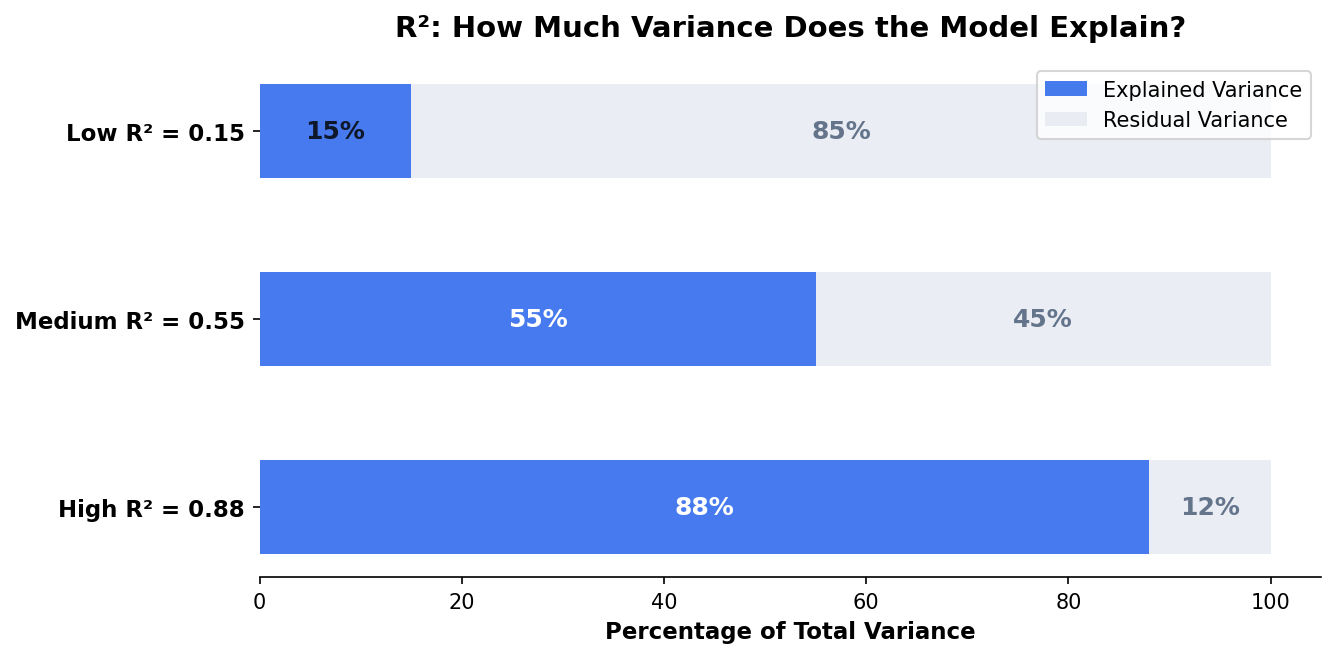

R² tells you how much you explained. RMSE tells you how far off you are. Context tells you if the model is useful.

R² Measures Explained Variance

R² Is Not Everything

R² = 0.30 may be excellent in social science where human behavior is noisy. R² = 0.95 in physics is expected. Always compare to domain baselines.

Adjusted R²

Penalizes adding useless variables. If adjusted R² drops when you add a feature, that feature is probably adding noise rather than explanatory power, so consider removing it.
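
The penalty is easy to see in the standard formula, adjusted R² = 1 − (1 − R²)(n − 1)/(n − p − 1). A short sketch follows; the inputs are hypothetical values chosen to show how the same R² is discounted more heavily as predictors are added to a 17-row dataset.

```python
def adjusted_r2(r2: float, n: int, p: int) -> float:
    """Adjusted R-squared for n observations and p predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - p - 1)

# Same raw R², different numbers of predictors (hypothetical inputs).
one_predictor = adjusted_r2(0.72, n=17, p=1)
three_predictors = adjusted_r2(0.72, n=17, p=3)
print(round(one_predictor, 3))     # 0.701
print(round(three_predictors, 3))  # 0.655
```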

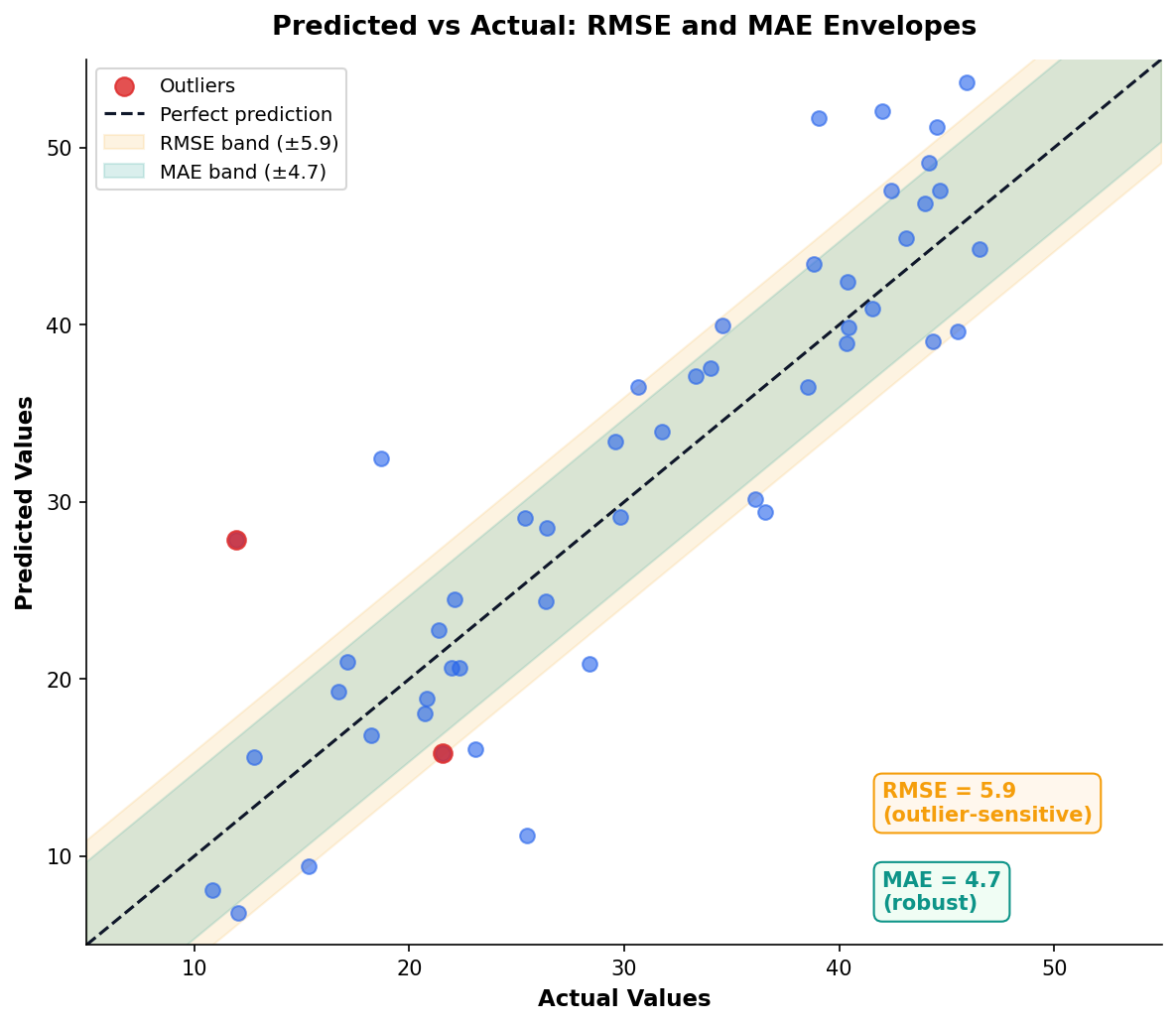

RMSE and MAE: Error in Units You Care About

Both measure prediction error in the same units as your outcome variable. RMSE penalizes large errors more heavily; MAE weights every error in proportion to its size.

| Metric | Strength | Weakness |
|---|---|---|
| RMSE | Penalizes big misses | Sensitive to outliers |
| MAE | Robust to outliers | Does not single out large misses |

Practical Rule

Report both. If RMSE >> MAE, you have outlier predictions that need investigation.
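
A small sketch of why the gap between RMSE and MAE is informative. The two error lists are made up for illustration: both have the same MAE, but one concentrates all of its error in a single large miss.

```python
import math

def rmse(errors):
    """Root mean squared error: squares each error before averaging."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))

def mae(errors):
    """Mean absolute error: averages the error sizes directly."""
    return sum(abs(e) for e in errors) / len(errors)

even_errors = [2, -2, 2, -2, 2]     # every prediction off by 2
one_big_miss = [0, 0, 0, 0, 10]     # four perfect predictions, one miss of 10

print(mae(even_errors), round(rmse(even_errors), 2))    # 2.0 2.0
print(mae(one_big_miss), round(rmse(one_big_miss), 2))  # 2.0 4.47
```

When errors are evenly sized the two metrics agree; the single large miss leaves MAE unchanged and more than doubles RMSE.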

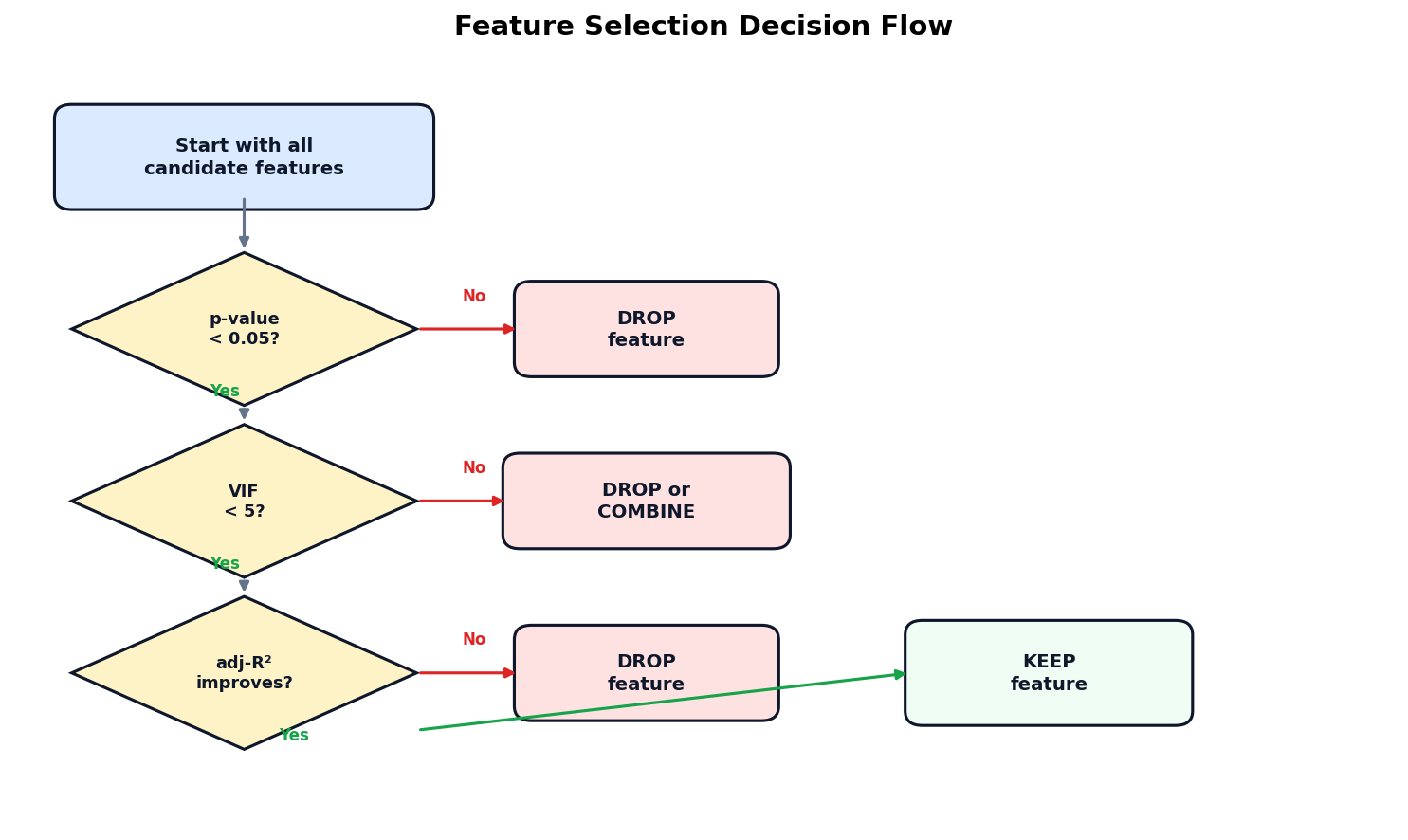

Feature Selection: Which Predictors Earn Their Place?

Stepwise Caution

Automated stepwise selection can overfit — it optimizes p-values, not real-world relevance. Always start with domain knowledge.

Practical Rule

If dropping a variable barely changes adjusted R², drop it. Simpler models are easier to explain to stakeholders.

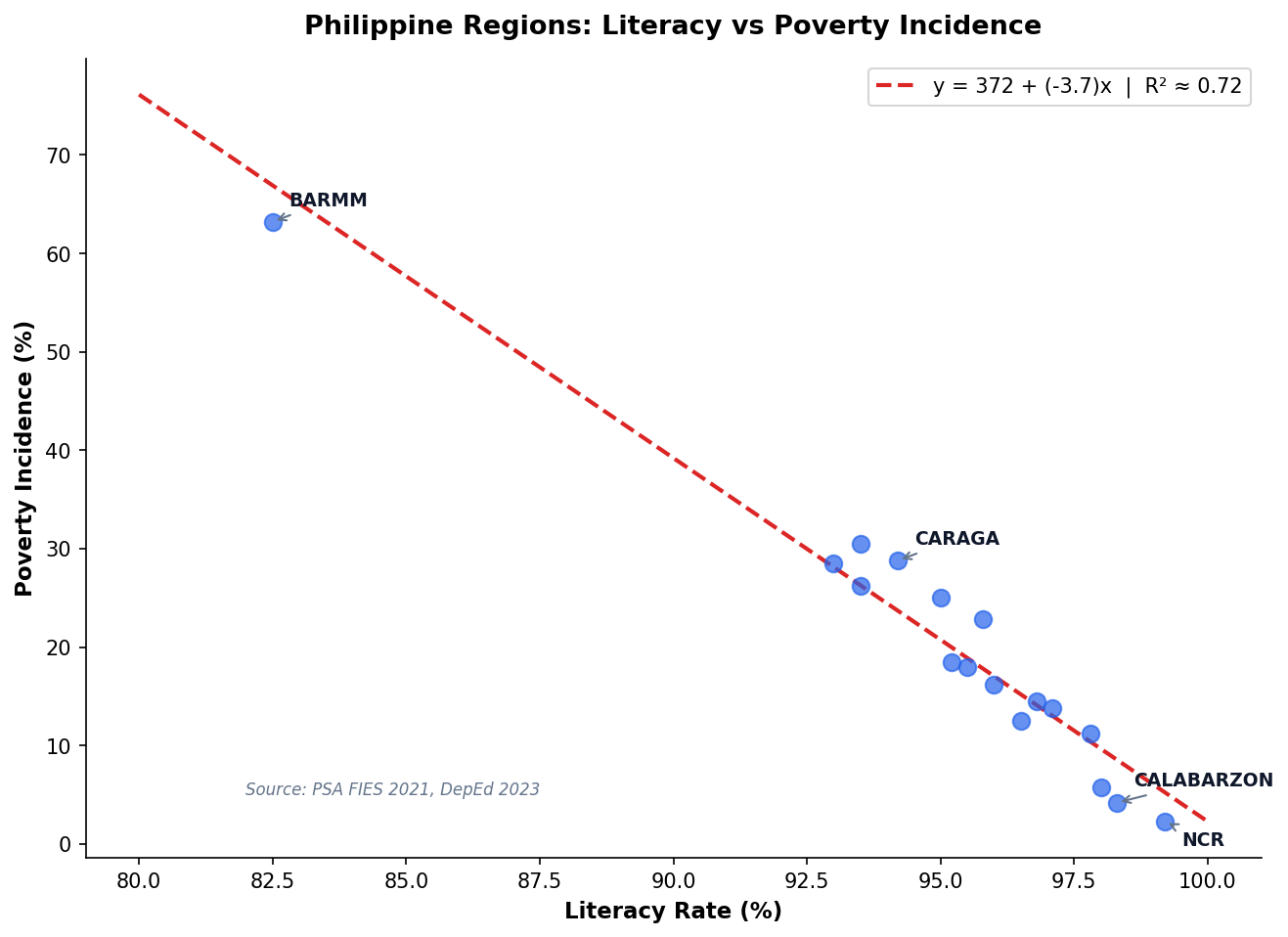

Philippine Regional Poverty: A Regression Case Study

Data Sources

PSA Family Income and Expenditure Survey 2021, DepEd Regional Statistics 2023. N = 17 regions.

What the Model Shows

Every 1 percentage-point increase in literacy is associated with a 2.8 pp decrease in poverty incidence (R² ≈ 0.72).

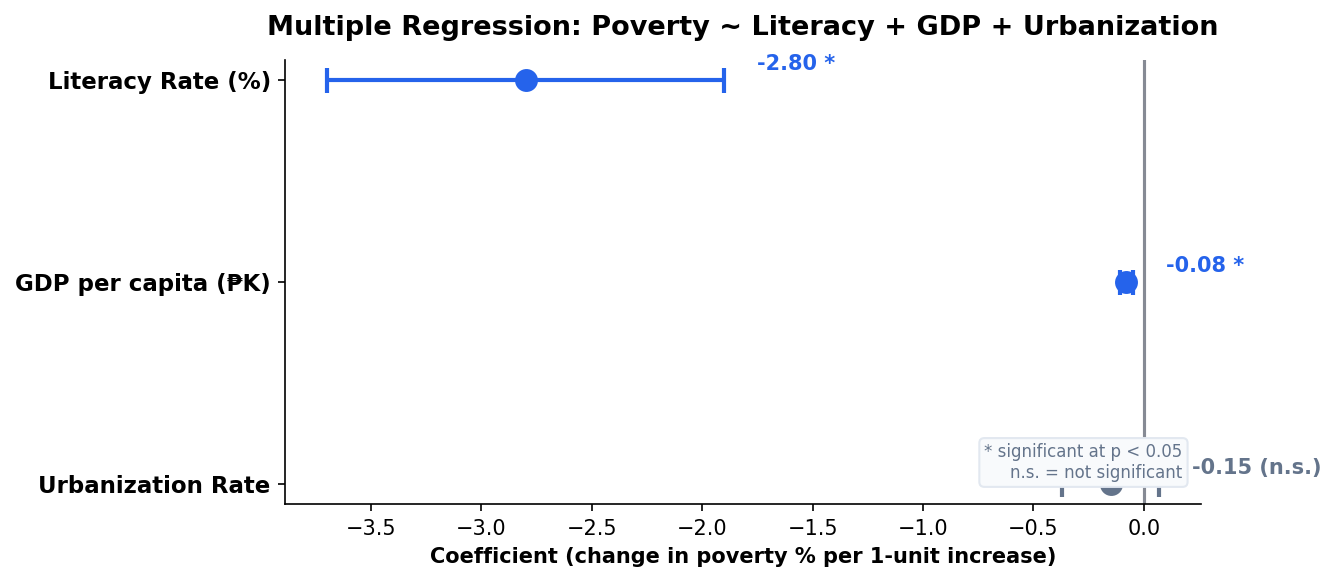

Multiple Predictors Paint a Richer Picture

Adding GDP per capita and urbanization rate to the literacy model:

| Predictor | Coeff. | Note |
|---|---|---|
| Literacy Rate | −2.80* | Strongest predictor |
| GDP per capita | −0.08* | Significant but small effect |
| Urbanization | −0.15 | Not significant after controlling for GDP |

\* statistically significant

Key Insight

Urbanization becomes non-significant once GDP is in the model — suggesting urbanization’s effect on poverty operates through economic output, not independently.

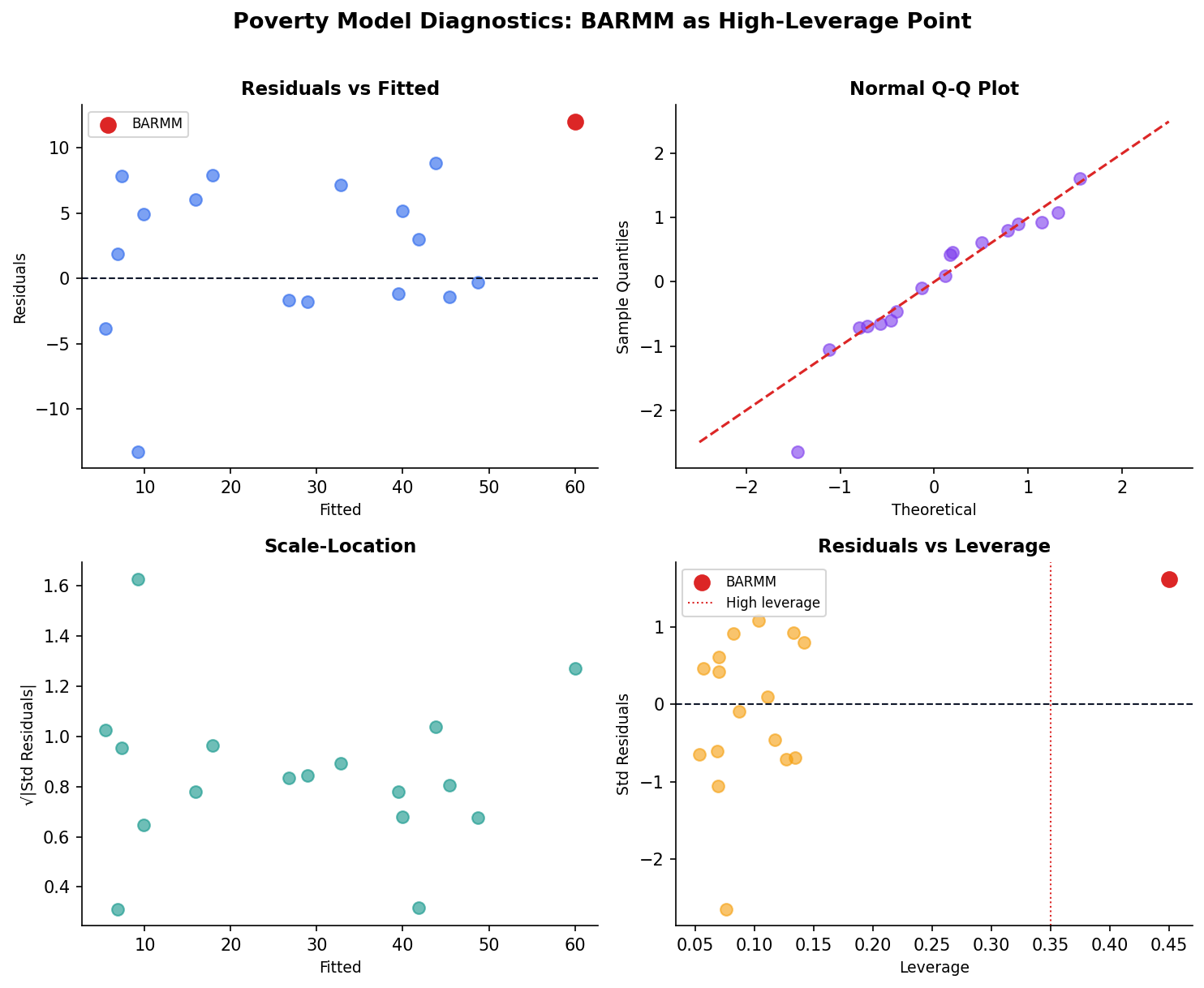

Diagnostics for the Poverty Model

What We See

Residuals are generally clean for 15 of the 17 regions; BARMM and NCR are the two exceptions. BARMM appears as a high-leverage point: its poverty rate (63%) is a structural outlier driven by conflict, not just economic factors.

Decision

Model is reasonable for most regions. BARMM and NCR are structural outliers — report them separately rather than letting them distort the model.

Three Regression Pitfalls to Avoid

Overfitting

Too many predictors for your sample size. Rule of thumb: aim for 10–15 observations per predictor. With 17 Philippine regions, that rule supports only 1–2 predictors, so treat 3 as a ceiling and interpret the results with caution.
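
The risk can be demonstrated with pure noise. In the sketch below, both the outcome and the predictors are random numbers with no real relationship, yet R² climbs as predictors are added to a 17-row dataset and reaches 1.0 once there are as many fitted parameters as rows. The data and the helper `r_squared` are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)

n = 17                                        # one row per region
y = rng.normal(size=n)                        # outcome: pure noise
noise_predictors = rng.normal(size=(n, 16))   # predictors unrelated to y

def r_squared(X, y):
    """R-squared of an OLS fit of y on X, with an intercept added."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ coef
    centered = y - y.mean()
    return 1 - (resid @ resid) / (centered @ centered)

r2_by_k = {k: r_squared(noise_predictors[:, :k], y) for k in (1, 3, 8, 12, 16)}
for k, r2 in r2_by_k.items():
    print(f"{k:>2} noise predictors -> R² = {r2:.2f}")
```

A high R² on a tiny dataset therefore says little by itself; adjusted R² and the observations-per-predictor rule are the guardrails.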

"I added 8 predictors to a 17-row dataset and got R² = 0.99!"

Extrapolation

The model predicts well within the data range. Outside it, predictions are meaningless. Don’t predict poverty for a region with 70% literacy if your data only goes down to 82%.

"Our model says poverty would be −5% if literacy reached 100%."

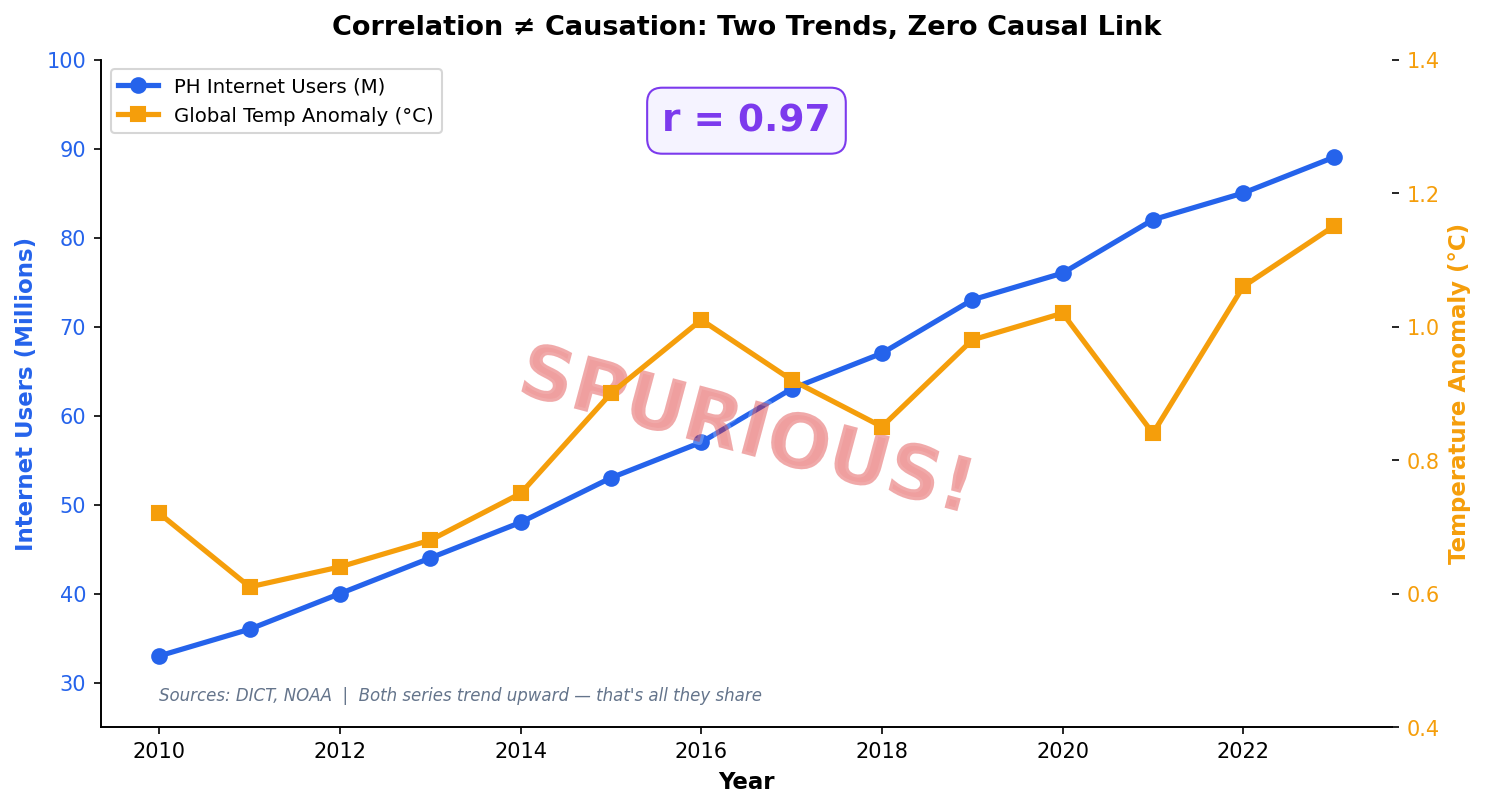

Correlation ≠ Causation

Regression coefficients show association, not causation. Ice cream sales and drowning both rise in summer — regression would show a “significant” relationship.

"The data proves that ice cream causes drowning."

All three pitfalls share a root cause: forgetting the limitations of the model and over-interpreting the output.

The Analyst’s Rule

Always state what the model cannot tell you alongside what it can. This builds credibility with stakeholders.

Correlation Does Not Imply Causation

A regression coefficient tells you that X and Y move together after controlling for other variables. It does not tell you that X causes Y.

When Can We Claim Causation?

Randomized controlled trials, natural experiments, or instrumental variables. Observational regression alone — no matter how high the R² — cannot prove causation.

The Analyst’s Responsibility

Always use language like “associated with” or “predicts,” never “causes” or “leads to,” when reporting regression results from observational data.

Session 1 Key Takeaways

- Regression for analytics = interpretation first, prediction second.

- Every coefficient is a “holding all else constant” statement — translate it for stakeholders.

- Check LINE assumptions visually with residual plots before trusting any p-value.

- VIF > 10 means your coefficients are unreliable — drop or combine correlated predictors.

- The Philippine poverty model shows literacy, not urbanization, as the stronger lever.

Next: Session 2 — Logistic Regression & Classification

Logistic Regression: Predicting Yes-or-No Outcomes

From probabilities to decisions


"Behind every automated approval is a probability and a threshold."

GCash approves or rejects 2 million loan applications per month.

Behind every decision is a logistic regression model trained on borrower features.

- 2M decisions/month
- 0.5 sec per decision
- ₱0 human review for standard cases

Session 2 Objectives

Logistic Fundamentals

The sigmoid function, log-odds, and how to interpret odds ratios for stakeholders.

Classification Metrics

Confusion matrix, precision, recall, F1, and ROC-AUC — and when each matters most.

Decision-Making

Threshold tuning, cost-benefit analysis, and Philippine case studies in credit and education.

By the end of this session you will be able to build, evaluate, and explain a classification model for binary outcomes.

Builds on: Session 1 Linear Regression

From Linear to Logistic

When the outcome is yes or no, linear regression breaks. The sigmoid function fixes it — and odds ratios make it interpretable.

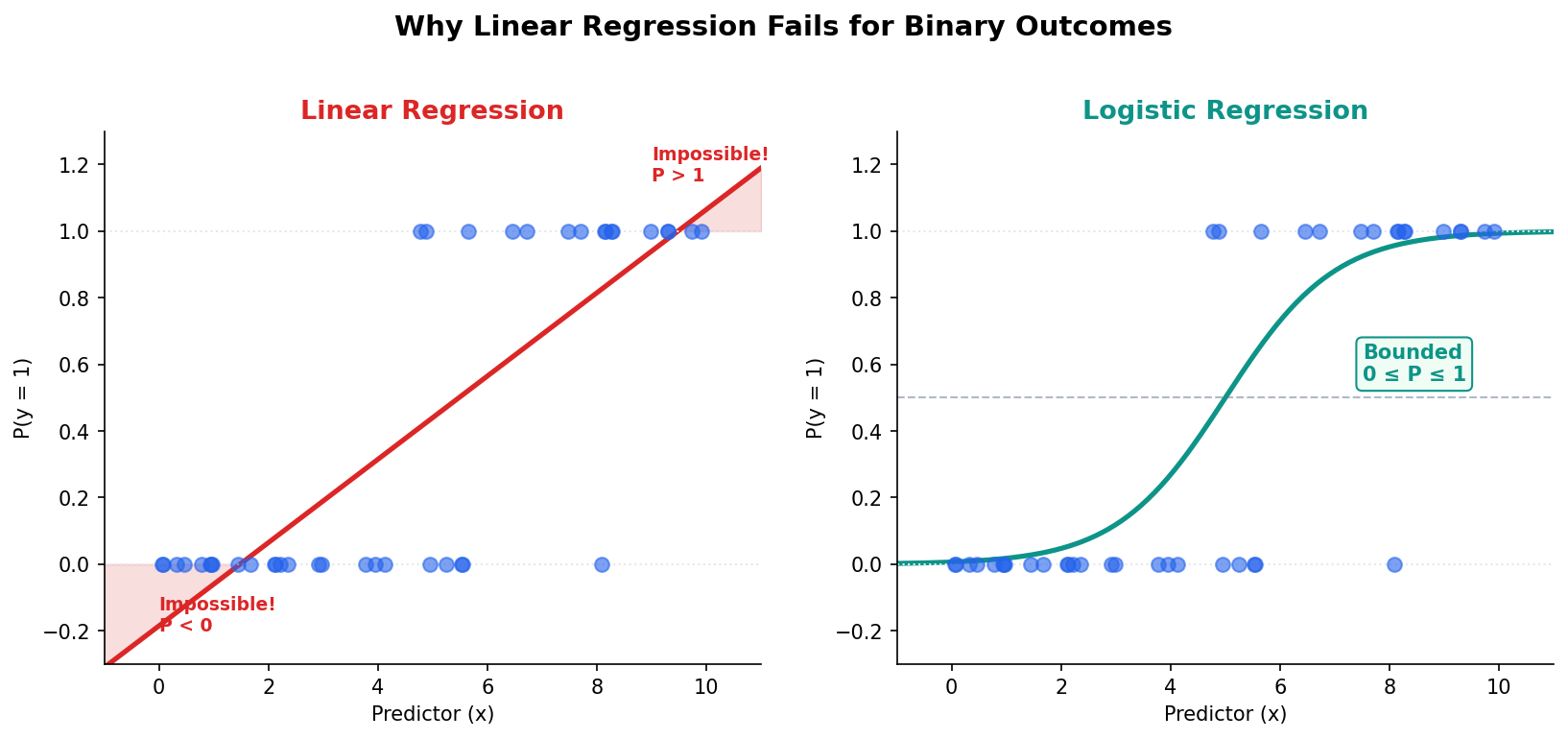

Linear Regression Cannot Predict Probabilities

The Problem

Linear regression can output values such as −0.2 or 1.3 for a probability, which is mathematically impossible, because its predictions are unbounded.

The Fix

Wrap the linear prediction in a sigmoid function. Output is always between 0 and 1 — a valid probability.

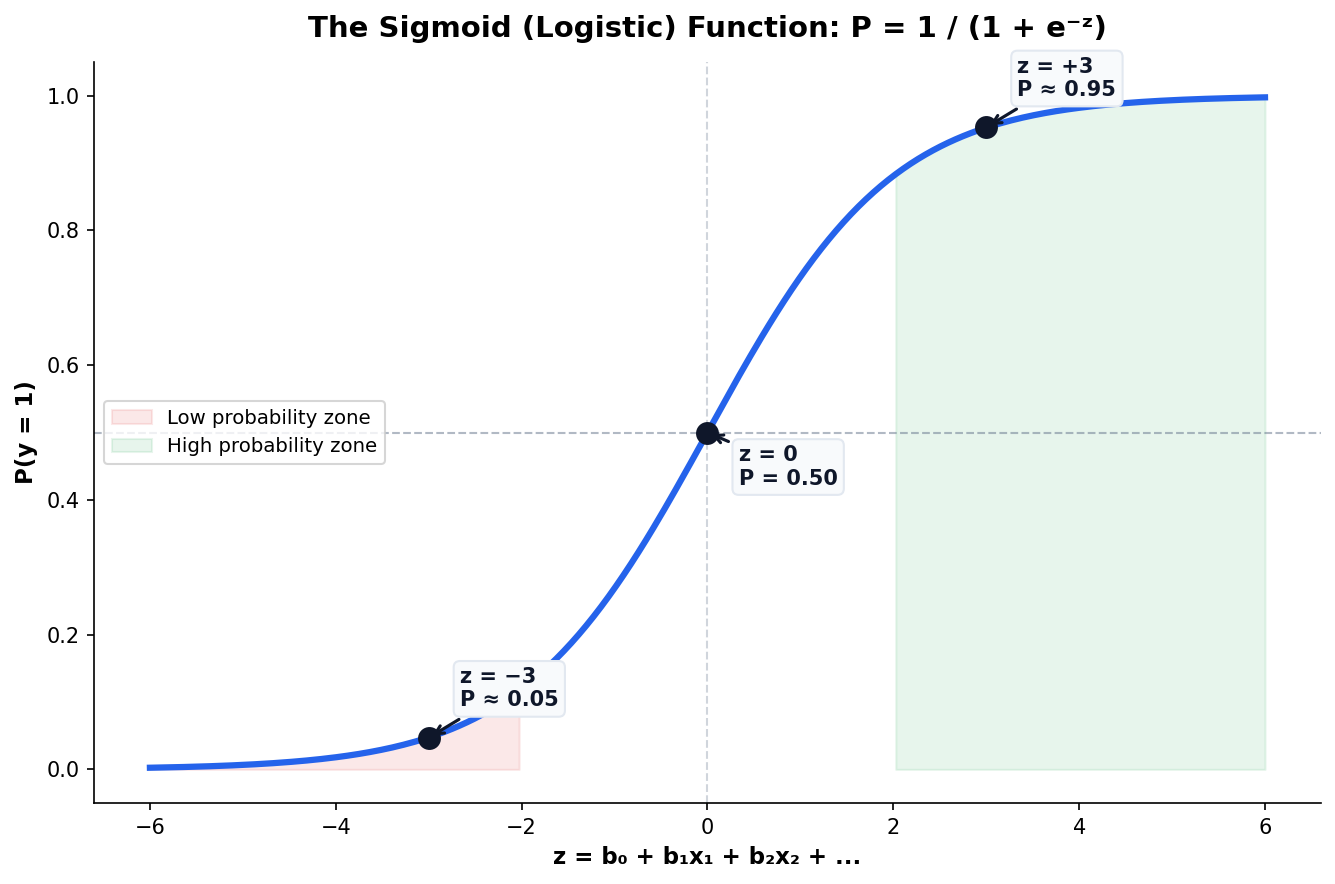

The Sigmoid Curve Maps Any Score to a Probability

The logistic function transforms any real-valued score z into a probability P between 0 and 1. The S-shape ensures smooth transitions near the decision boundary.

Why Sigmoid?

Natural for binary outcomes: as evidence increases, probability approaches 1 asymptotically but never exceeds it.
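
A minimal sketch of the logistic (sigmoid) function, P = 1 / (1 + e^(−z)). The two-branch form is one common way to avoid overflow for large negative scores; the example scores are arbitrary.

```python
import math

def sigmoid(z: float) -> float:
    """Map any real-valued score z to a probability between 0 and 1."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # for negative z, this form avoids overflow in exp
    return ez / (1.0 + ez)

for z in (-4, -1, 0, 1, 4):
    print(z, round(sigmoid(z), 3))
```

A score of 0 maps to exactly 0.5, and scores of ±4 already sit within about 0.02 of the bounds, which is the S-shape described above.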

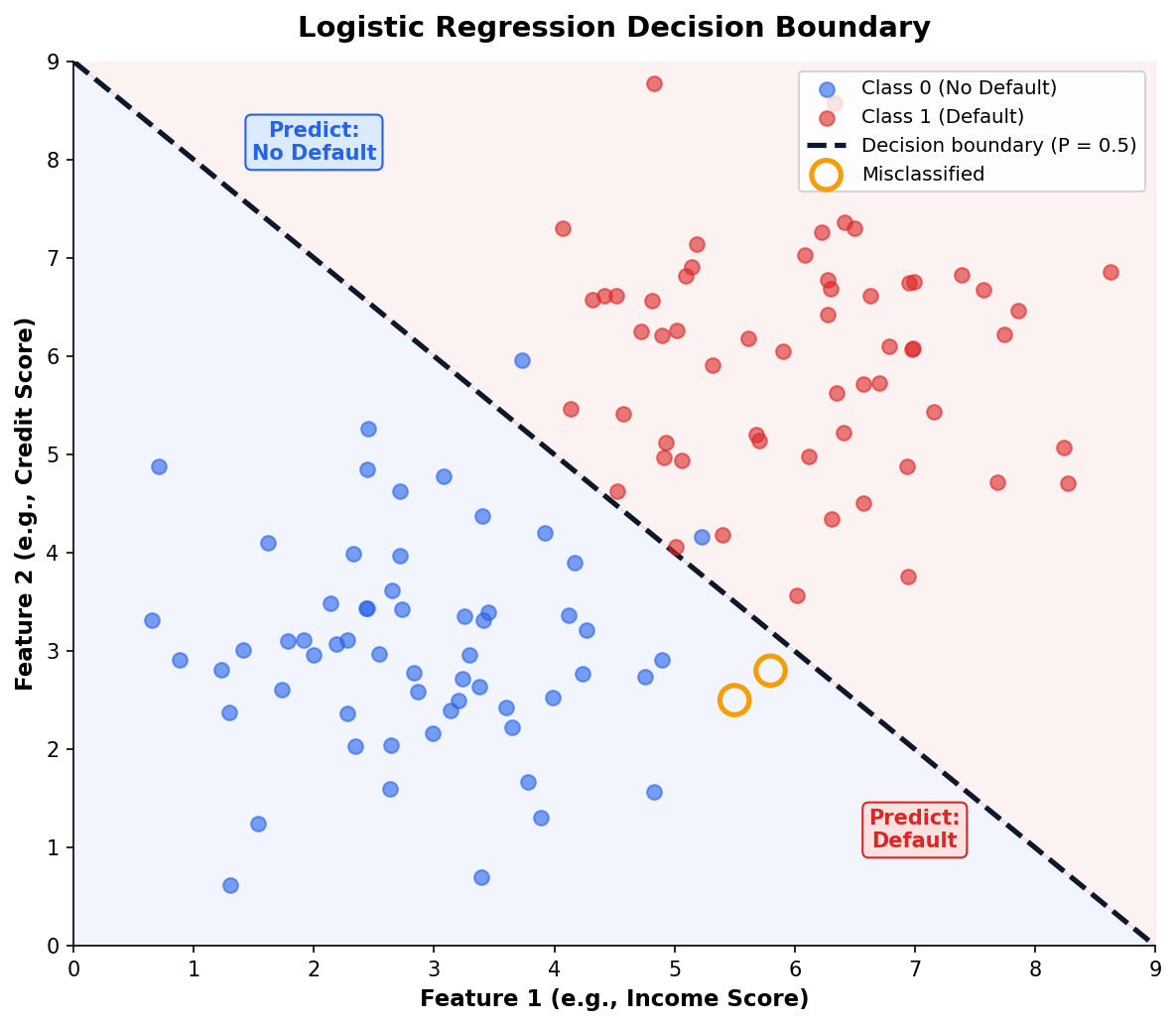

The Decision Boundary

Where P crosses 0.5 is the default classification threshold — but it’s rarely the optimal one for real problems.

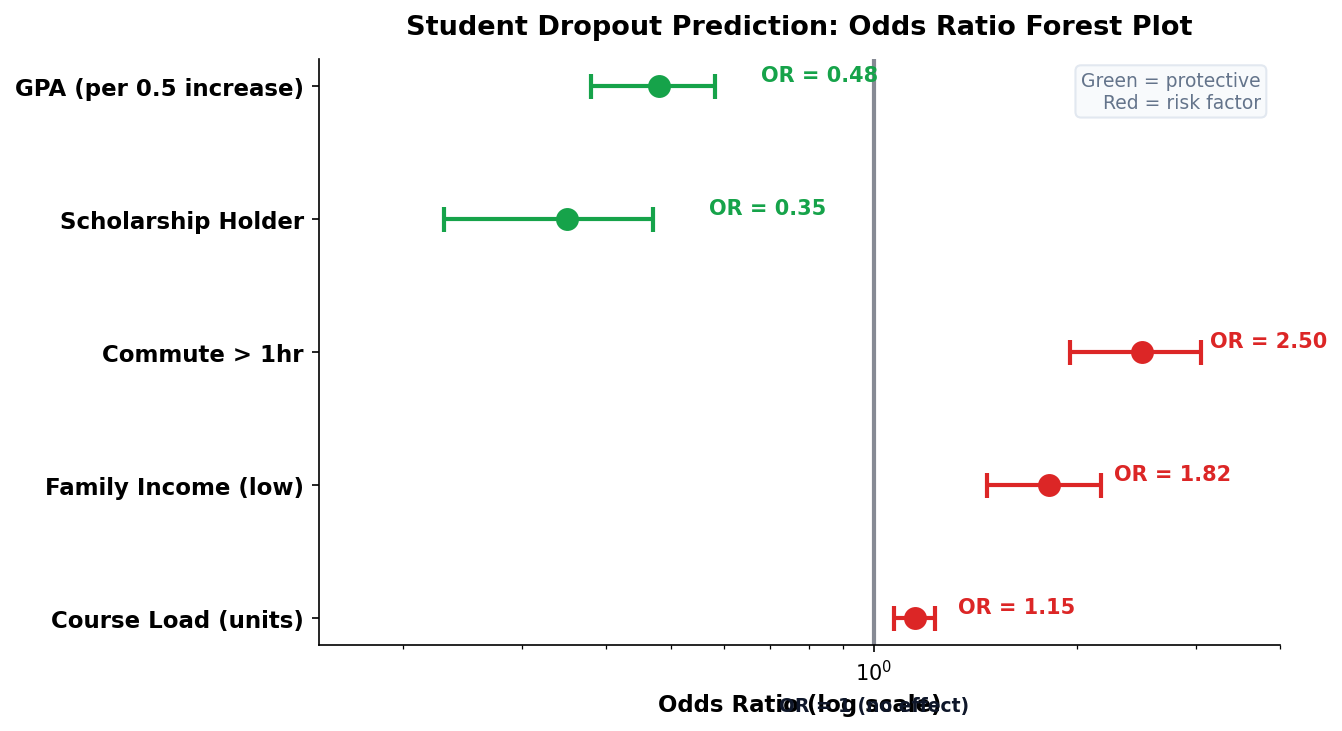

Odds Ratios Make Coefficients Interpretable

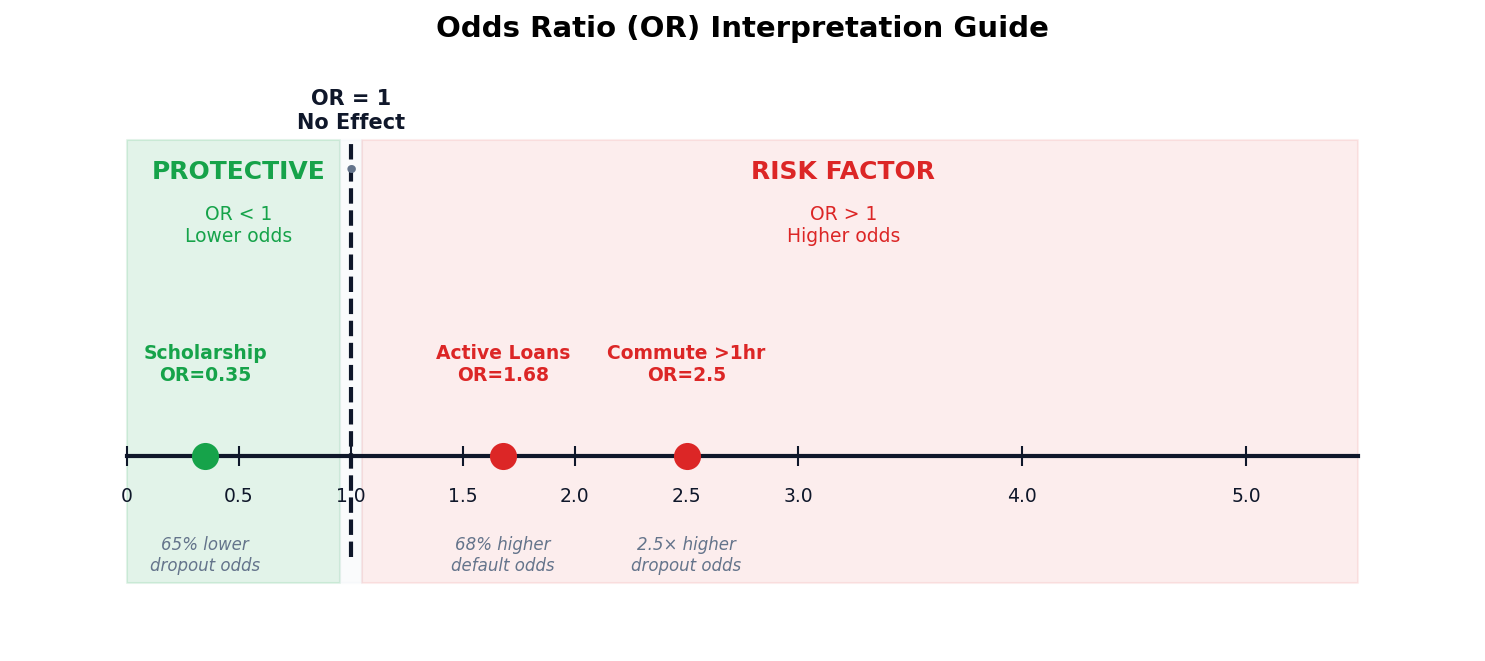

In logistic regression, we exponentiate the coefficient (e^b) to get the odds ratio. This tells stakeholders how much the odds change per unit increase in the predictor.

How to Read Odds Ratios

OR = 1: no effect. OR > 1: increases odds. OR < 1: decreases odds. OR = 2.5 means the odds are 2.5× higher for a 1-unit increase.

Example

A scholarship holder has OR = 0.35 for dropout — meaning 65% lower odds of dropping out compared to non-scholarship students.
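
A sketch of the arithmetic behind the scholarship example. The coefficient is back-calculated from OR = 0.35, and the 20% baseline dropout probability is a hypothetical value used only to show how a change in odds maps onto a change in probability.

```python
import math

def odds_ratio(coefficient: float) -> float:
    """Exponentiate a logistic regression coefficient to get the odds ratio."""
    return math.exp(coefficient)

def percent_change_in_odds(or_value: float) -> float:
    return (or_value - 1) * 100

b_scholarship = math.log(0.35)          # coefficient implied by OR = 0.35
or_scholarship = odds_ratio(b_scholarship)
print(round(b_scholarship, 2))                           # -1.05
print(round(percent_change_in_odds(or_scholarship), 1))  # -65.0

# Odds are not probabilities: apply the OR to an assumed 20% baseline.
baseline_p = 0.20
baseline_odds = baseline_p / (1 - baseline_p)
new_odds = baseline_odds * or_scholarship
new_p = new_odds / (1 + new_odds)
print(round(new_p, 3))
```

Under that assumed baseline, 65% lower odds moves the probability from 20% to roughly 8%, a 60% relative drop rather than 65%, which is why reports should say "odds" rather than "risk".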

Decision Boundaries Separate the Outcomes

The Default Threshold is 0.5

But it’s rarely optimal. If false negatives are costly (missing fraud, missing disease), lower the threshold to catch more positives.

Moving the Boundary

Lowering the threshold catches more true positives but also more false positives. The business context determines the right balance.

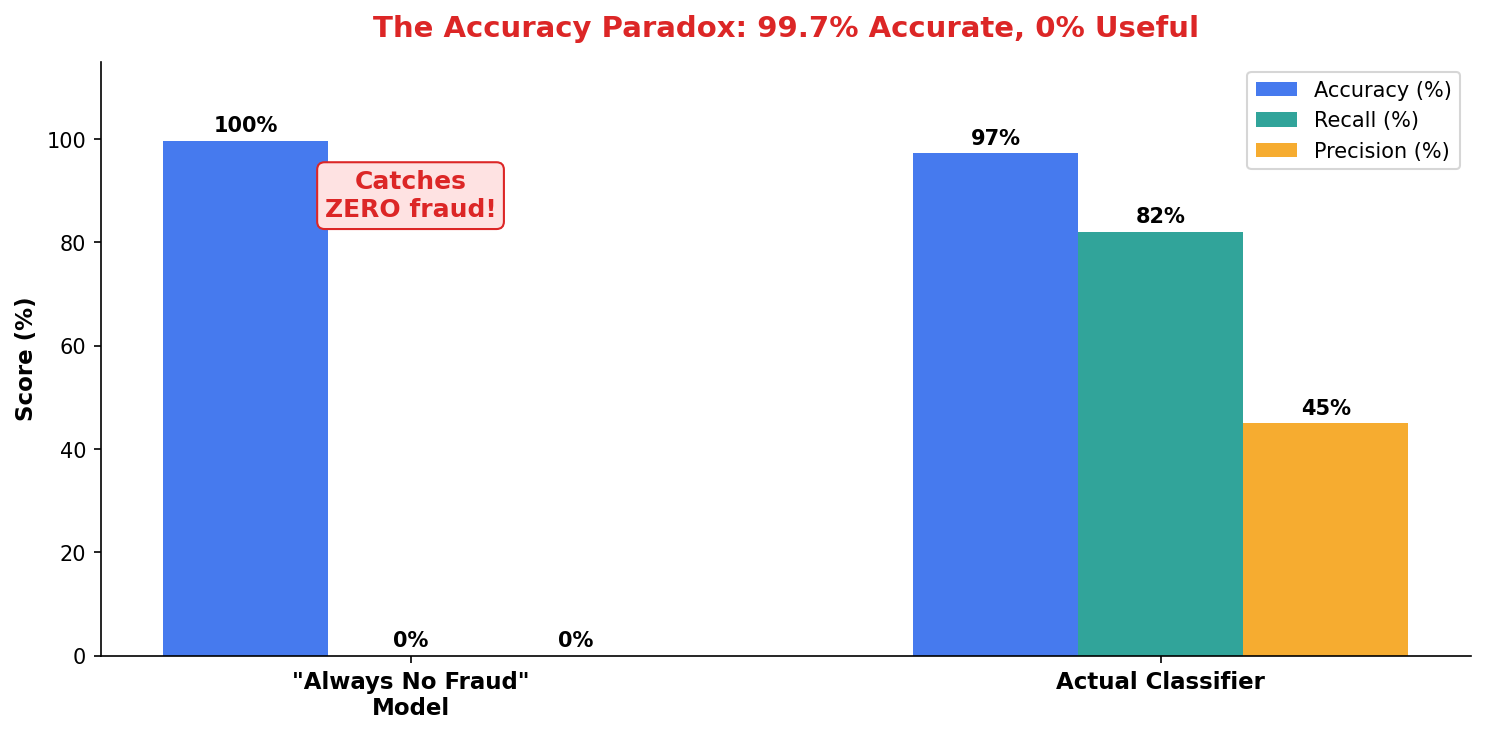

Measuring Classification Quality

Accuracy is a lie in imbalanced data. Precision, recall, and AUC tell the real story.

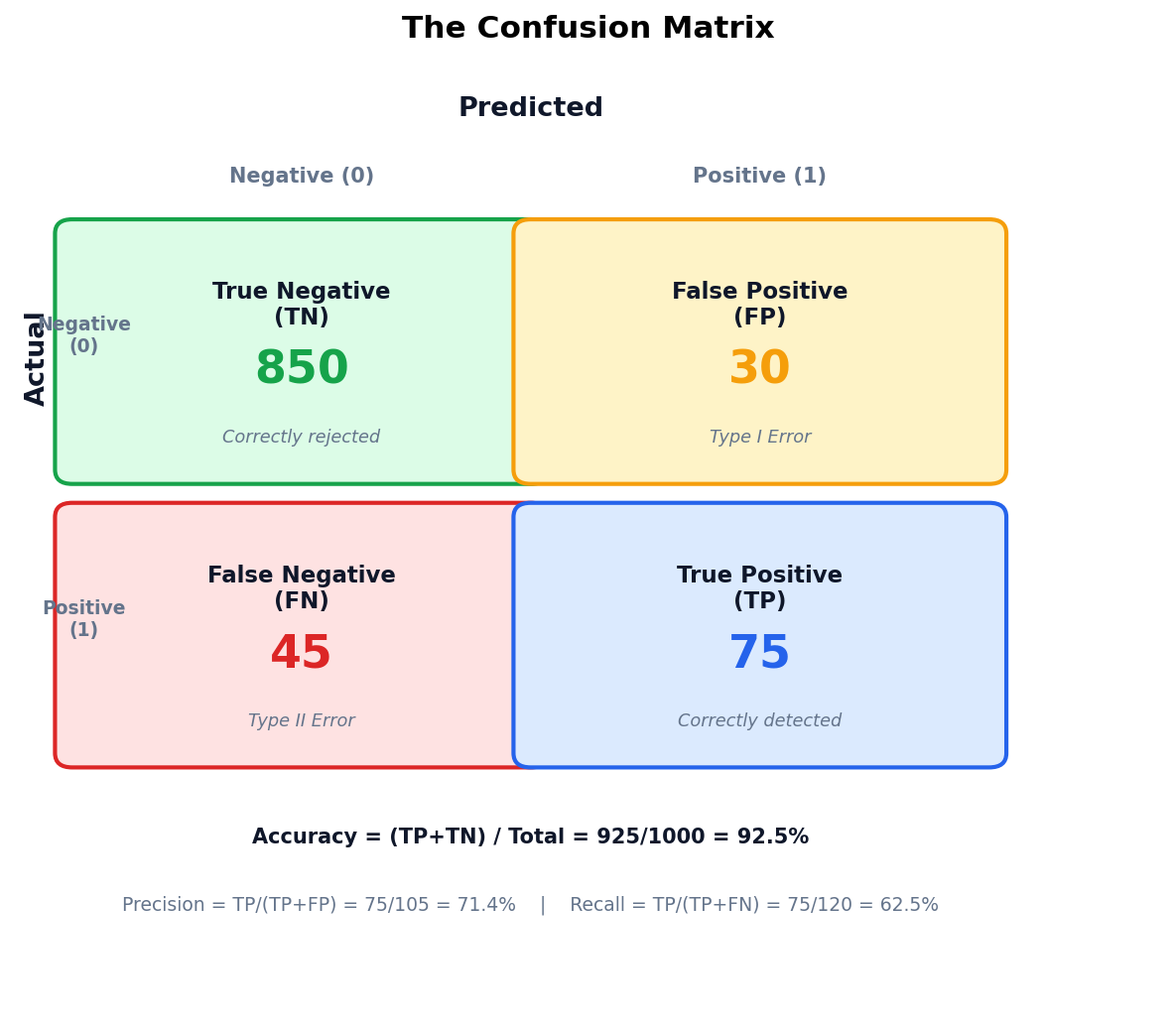

The Confusion Matrix: Every Classifier’s Report Card

Type I Error (False Positive)

Flagging a good borrower as default risk — costs the lender a customer and reputation.

Type II Error (False Negative)

Missing an actual default — the costly one. The lender loses the principal.

Accuracy Misleads When Classes Are Imbalanced

The Accuracy Paradox

A model that predicts “no fraud” for every transaction achieves 99.7% accuracy — but catches zero fraud. Useless.

Philippine Context

BSP reports credit card fraud at ~0.3% of transactions. Any accuracy metric above 99% is meaningless without checking recall.
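
The paradox in a few lines, as a sketch: 100,000 hypothetical transactions with the ~0.3% fraud rate cited above, scored by a "model" that always predicts no fraud.

```python
n_transactions = 100_000
n_fraud = 300  # 0.3% of transactions

y_true = [1] * n_fraud + [0] * (n_transactions - n_fraud)
y_pred = [0] * n_transactions  # always predicts "no fraud"

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / n_transactions
true_positives = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
recall = true_positives / n_fraud

print(f"accuracy = {accuracy:.1%}, recall = {recall:.1%}")
# accuracy = 99.7%, recall = 0.0%
```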

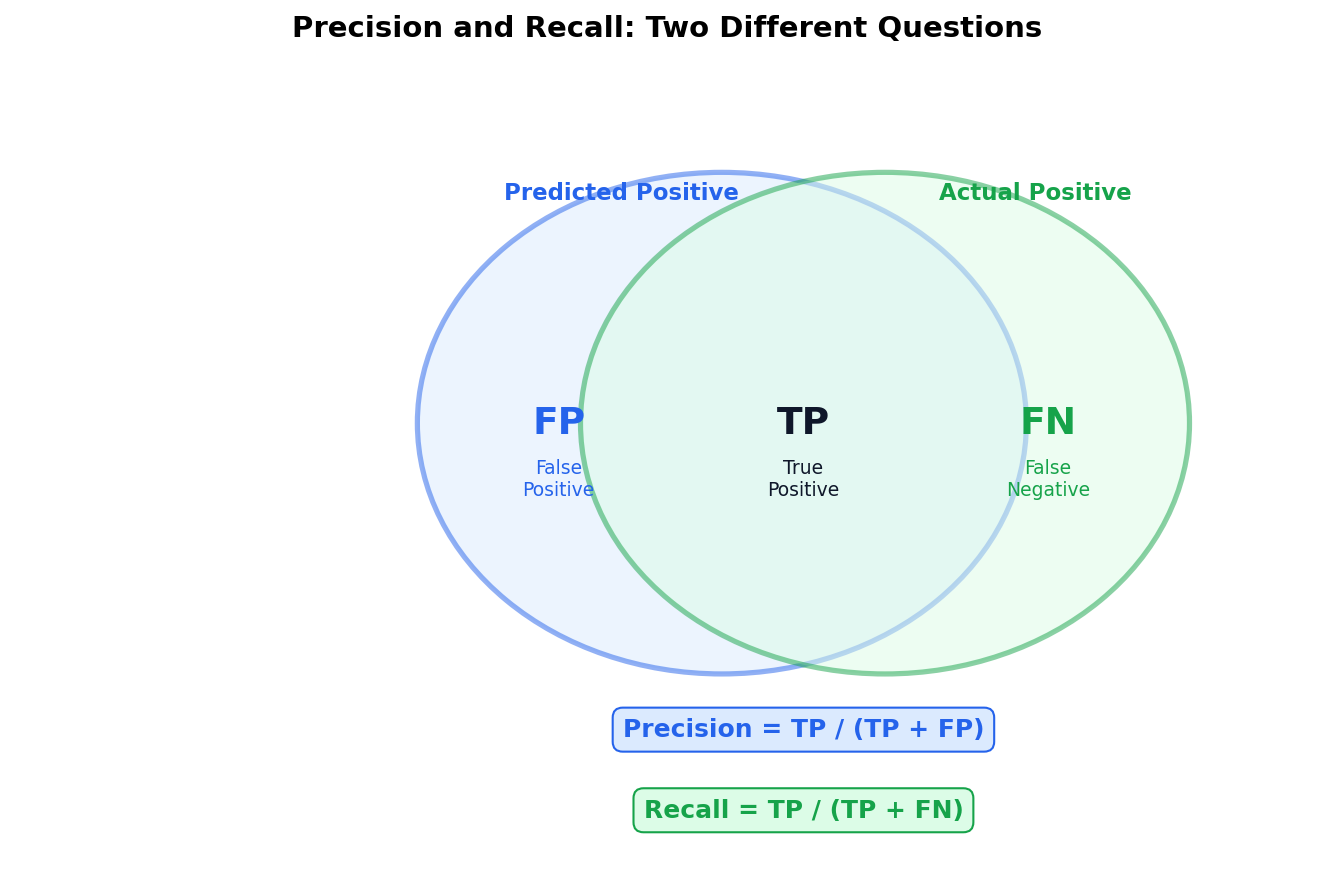

Precision and Recall Answer Different Questions

Precision asks: “Of everything I flagged, how much was correct?” Recall asks: “Of everything that was positive, how much did I catch?”

Prioritize precision: spam filter, where you must not block legitimate email. Cost of FP > cost of FN.

Prioritize recall: disease screening, where you must not miss sick patients. Cost of FN > cost of FP.

F1 Score

Harmonic mean of precision and recall. Use when you need to balance both and can’t afford to optimize one at the expense of the other.
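
A sketch of the three metrics computed from confusion-matrix counts. The counts are hypothetical; they describe a model that is usually right when it flags something but misses most of the positives.

```python
def precision_recall_f1(tp: int, fp: int, fn: int):
    """Return (precision, recall, F1) from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical counts: 80 true positives, 20 false positives, 120 false negatives.
precision, recall, f1 = precision_recall_f1(tp=80, fp=20, fn=120)
print(round(precision, 2), round(recall, 2), round(f1, 3))  # 0.8 0.4 0.533
```

The harmonic mean (0.533) sits closer to the weaker of the two numbers than the simple average (0.6) would, so F1 cannot be pushed up by optimizing only one side.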

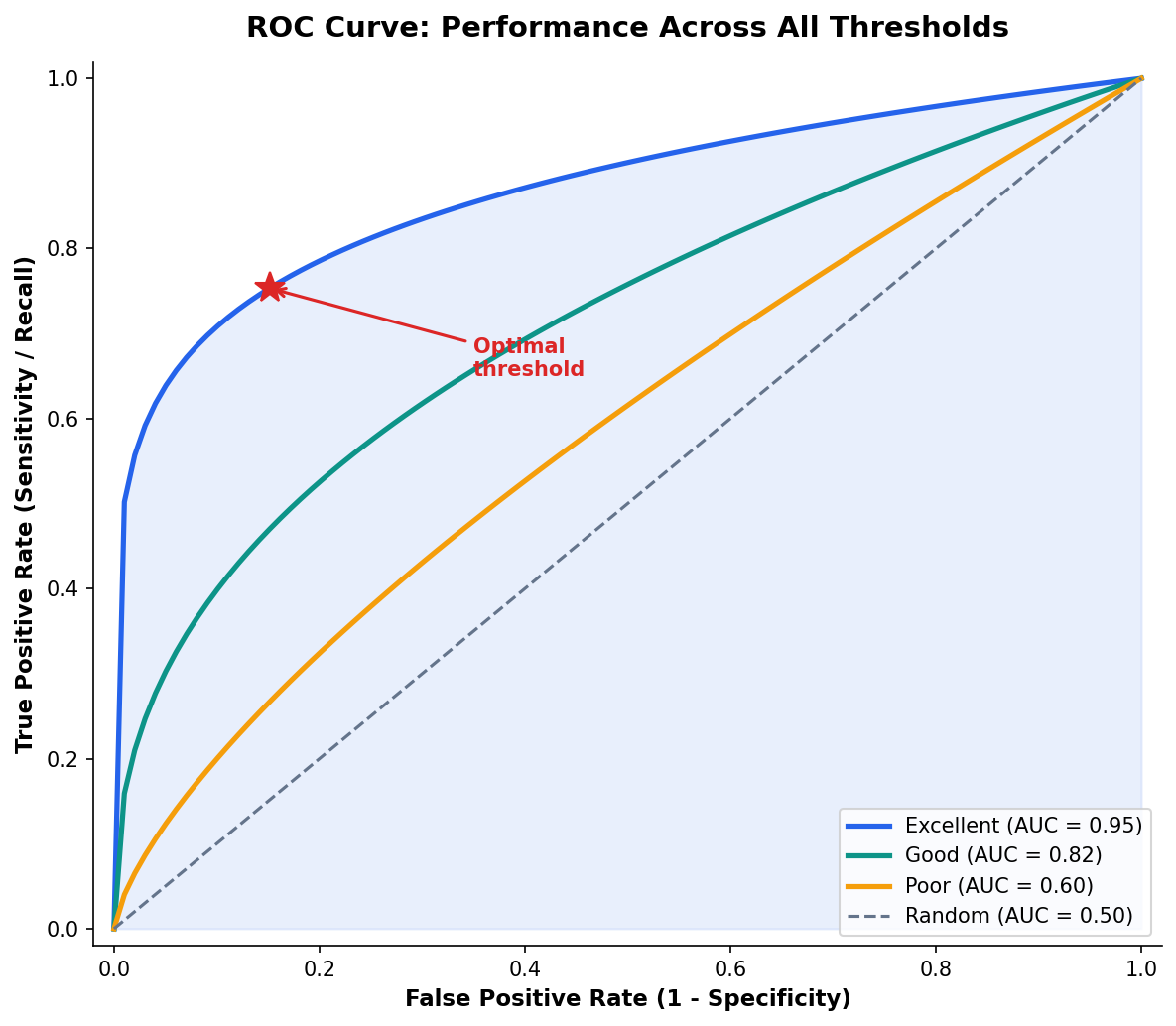

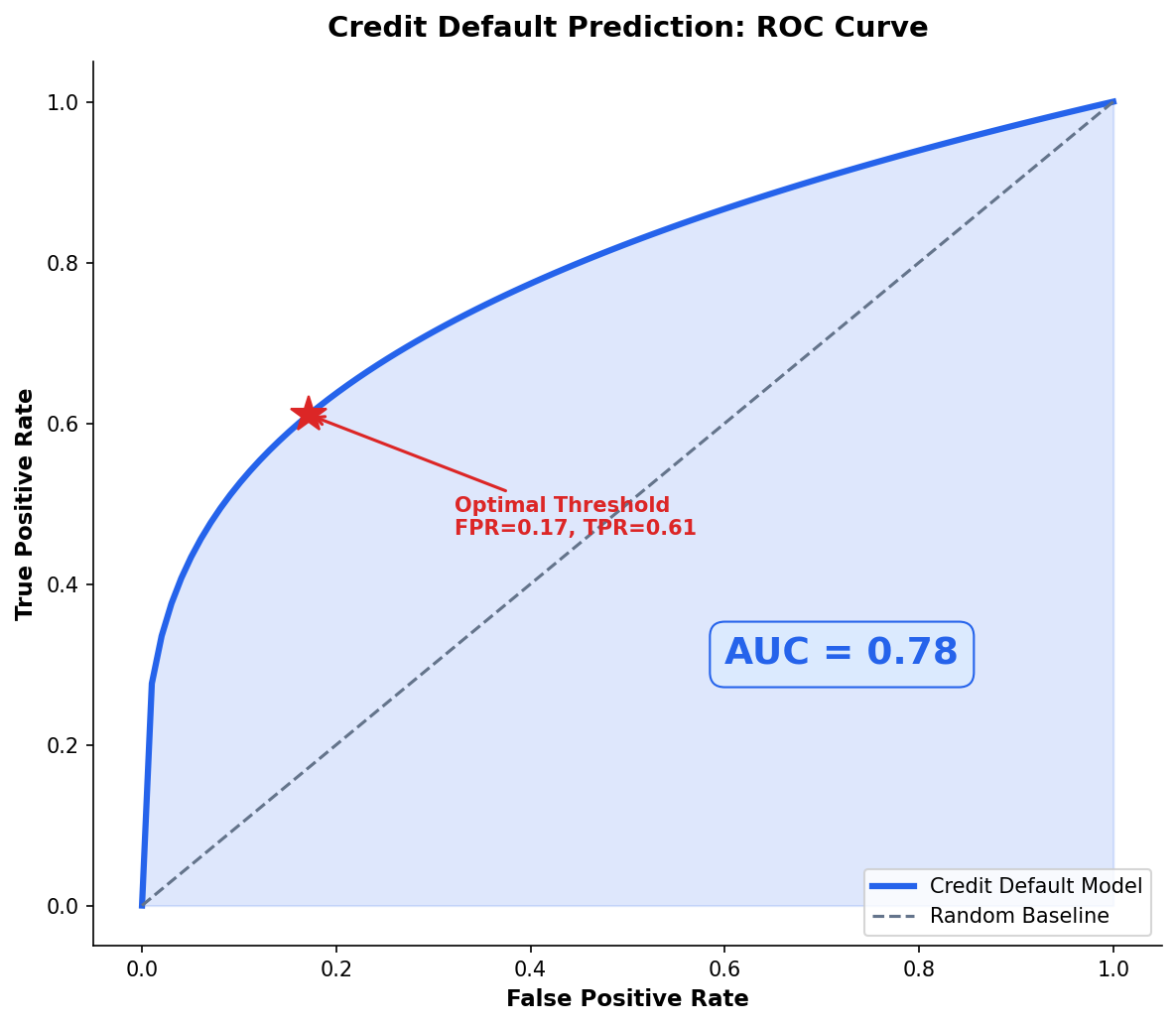

The ROC Curve Summarizes Performance Across All Thresholds

AUC Interpretation

0.5 = coin flip. 0.7–0.8 = fair. 0.8–0.9 = good. 0.9+ = excellent. Always compare to a baseline model, not to perfection.

When to Use ROC vs PR Curve

ROC for balanced datasets. Precision-Recall curve for imbalanced data — it won’t hide poor performance on the minority class.
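
One way to build intuition for AUC is its ranking interpretation: it equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The sketch below computes it by brute-force pairwise comparison; the labels and scores are invented, and for real datasets a library routine would be the practical choice.

```python
def roc_auc(y_true, scores):
    """AUC as the share of positive-negative pairs ranked correctly (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical labels and model scores.
y_true = [0, 0, 1, 0, 1, 1, 0, 1]
scores = [0.1, 0.3, 0.35, 0.4, 0.6, 0.7, 0.65, 0.9]

auc = roc_auc(y_true, scores)
print(auc)  # 0.8125
```

Here 13 of the 16 positive-negative pairs are ordered correctly, which falls in the "good" band of the scale above.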

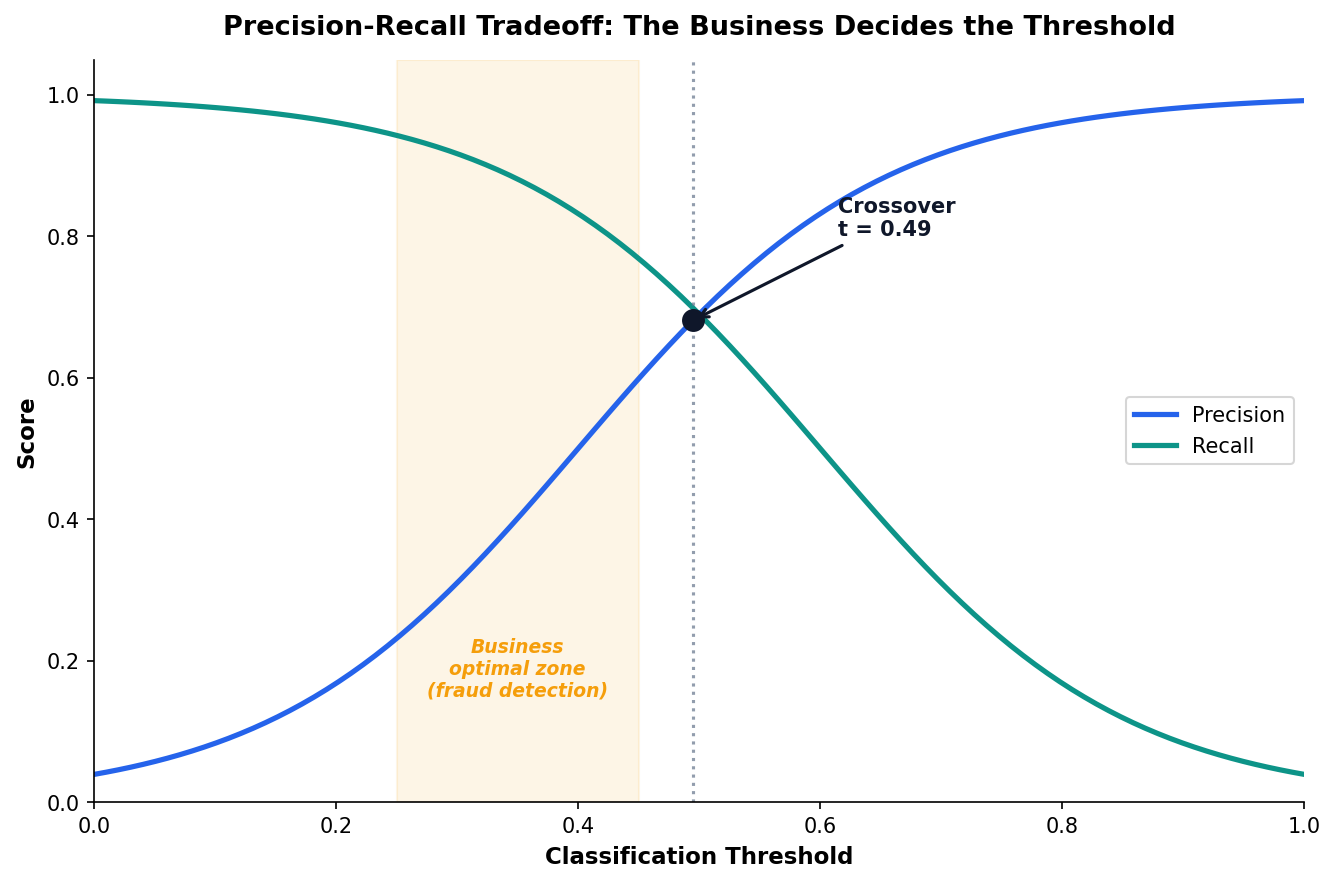

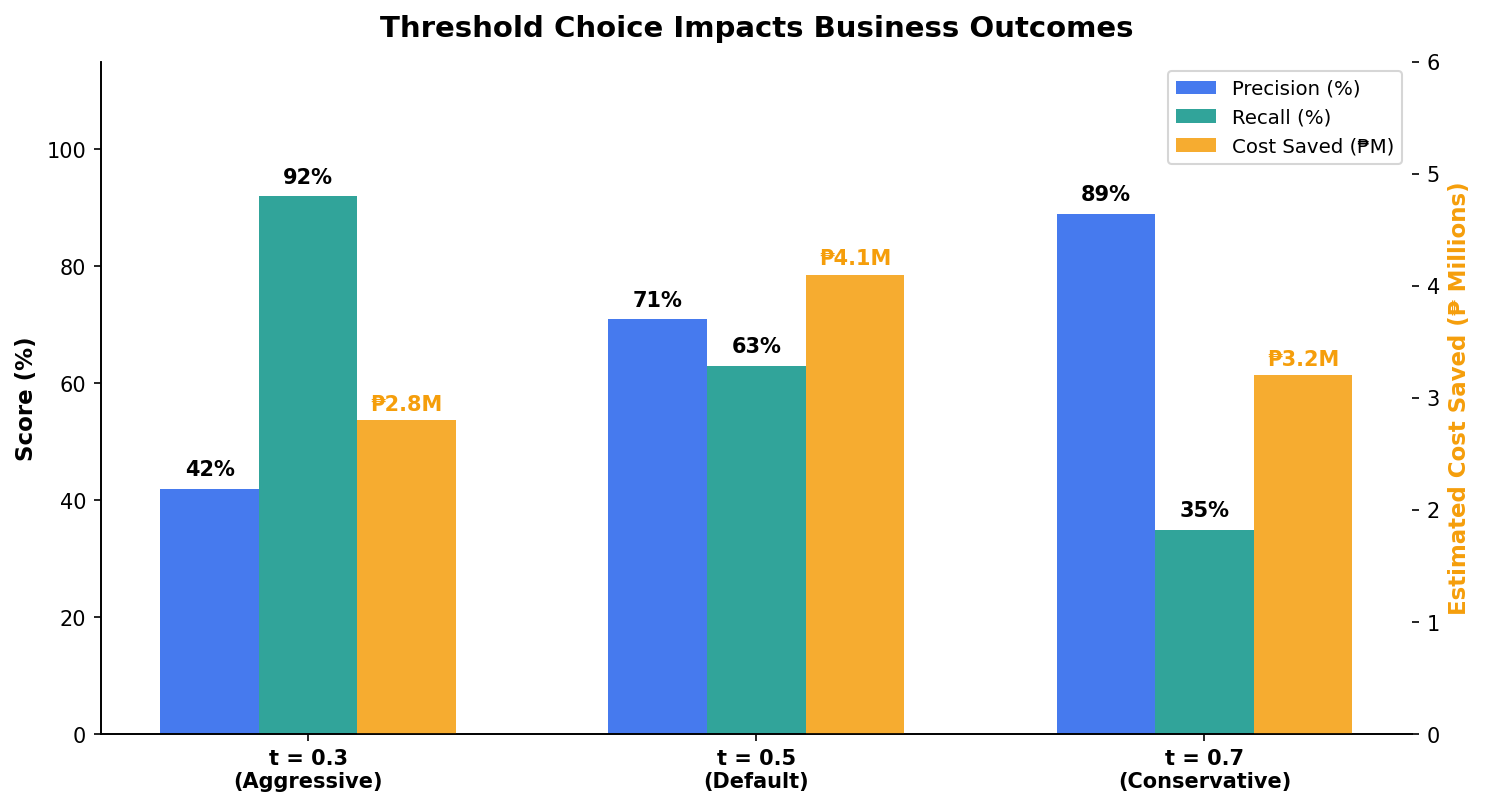

Threshold Tuning: The Business Decides, Not the Algorithm

Cost of Errors

The optimal threshold depends on the relative cost of false positives vs false negatives. This is a business decision, not a statistical one.

Philippine Example

For student loan defaults, missing a default costs ₱50K+. Over-flagging costs a phone call. Set the threshold lower to maximize recall.
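
A sketch of cost-based threshold selection under stated assumptions: a missed default costs ₱50,000 as in the example above, a follow-up phone call is assumed to cost ₱200, and the ten scored borrowers are invented. In practice the threshold would be chosen on a validation set rather than on the data used to fit the model.

```python
COST_FN = 50_000  # missed default (from the example above)
COST_FP = 200     # assumed cost of an unnecessary phone call (hypothetical)

def total_cost(y_true, probs, threshold):
    """Total cost of the errors made when flagging every case with p >= threshold."""
    fn = sum(1 for t, p in zip(y_true, probs) if t == 1 and p < threshold)
    fp = sum(1 for t, p in zip(y_true, probs) if t == 0 and p >= threshold)
    return fn * COST_FN + fp * COST_FP

# Hypothetical borrowers: 1 = defaulted, with the model's predicted probability.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
probs = [0.9, 0.45, 0.2, 0.6, 0.35, 0.3, 0.15, 0.1, 0.05, 0.02]

thresholds = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7]
costs = {t: total_cost(y_true, probs, t) for t in thresholds}
best = min(costs, key=costs.get)
print(best, costs[best])  # 0.2 600
print(0.5, costs[0.5])    # 0.5 100200
```

With these costs the cheapest threshold is far below the default 0.5, because a few extra phone calls are much cheaper than a single missed default.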

Philippine Applications & What Comes Next

From credit scoring to student retention — logistic regression powers decisions across Philippine institutions.

Predicting Student Dropout: A UP Cebu Scenario

Data Source

Simulated based on CHED enrollment statistics and UP System retention rates. N = 2,000 students.

Key Findings

Scholarship holders have 65% lower dropout odds. Every 30-min commute increase raises odds by 40%. GPA is the single strongest predictor.

Credit Default Prediction for Philippine Lenders

BSP-regulated consumer lending (GCash GLoan, Bayad Center) requires explainable models per BSP Circular 855.

| Feature | OR | Interpretation |
|---|---|---|
| Monthly Income | 0.62 | Higher income → lower default odds |
| Outstanding Balance | 1.45 | Higher balance → higher risk |
| Employment Length | 0.78 | Longer tenure → lower risk |
| Active Loans | 1.68 | More loans → 68% higher default odds |

Regulatory Context

BSP requires that lending models be explainable to regulators and consumers. Logistic regression’s odds ratios satisfy this requirement directly.

From Odds Ratios to Actionable Recommendations

The analyst’s job: translate OR = 1.68 into “every additional active loan increases the odds of default by 68%.”

Decision Matrix

Low risk (P < 0.3): auto-approve. Medium (0.3–0.7): manual review. High (P > 0.7): auto-reject. Thresholds set by business, not by data science.
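
The decision matrix maps directly onto a small routing function. This is a sketch: the function name is hypothetical, and the cutoffs are parameters precisely because the business sets them.

```python
def route_application(p_default: float, low: float = 0.3, high: float = 0.7) -> str:
    """Map a predicted default probability to a business action."""
    if p_default < low:
        return "auto-approve"
    if p_default > high:
        return "auto-reject"
    return "manual review"

for p in (0.10, 0.30, 0.55, 0.70, 0.90):
    print(p, route_application(p))
```

Probabilities exactly at 0.3 or 0.7 fall into manual review here, matching the "0.3–0.7" band above.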

The Deliverable

A stakeholder-ready table mapping model output to business actions — not a confusion matrix.

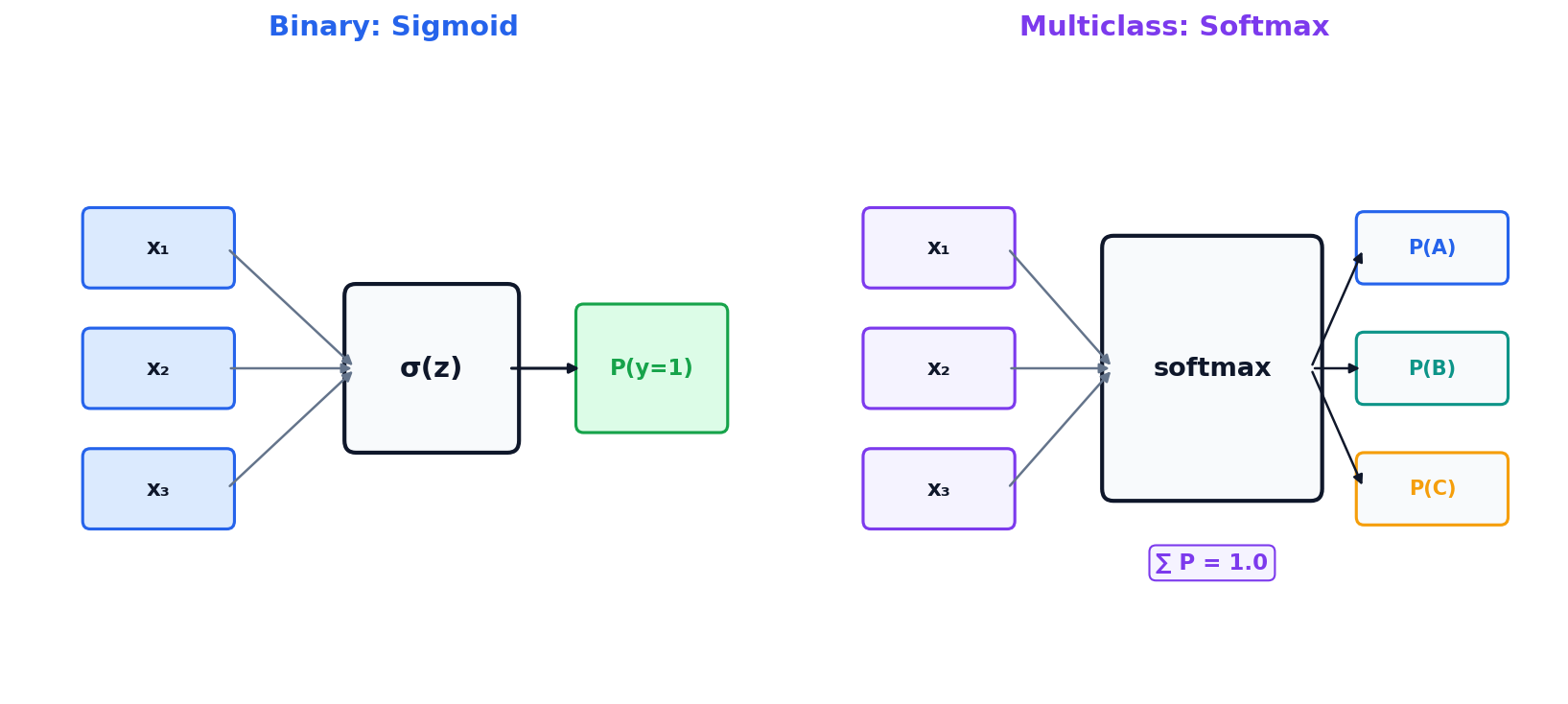

Multiclass Extension: Softmax Regression

When the outcome has more than two categories (e.g., customer segment A/B/C), the sigmoid extends to softmax — one probability per class, summing to 1.
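
A minimal softmax sketch, with arbitrary example scores for three classes. Subtracting the maximum score before exponentiating is a standard numerical-stability step and does not change the result.

```python
import math

def softmax(scores):
    """Convert a list of real-valued class scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])  # hypothetical scores for segments A, B, C
print([round(p, 3) for p in probs])
print(sum(probs))
```

With two classes and scores [z, 0], softmax gives the same probability as the sigmoid of z, which is the sense in which it extends binary logistic regression.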

Brief Mention

If you have 3+ classes, use softmax. For most analytics use cases, binary logistic regression covers the majority of decision problems.

Week 8 Preview

Decision trees handle multiclass naturally and don’t require the linearity assumption. Next week we explore when trees beat regression.

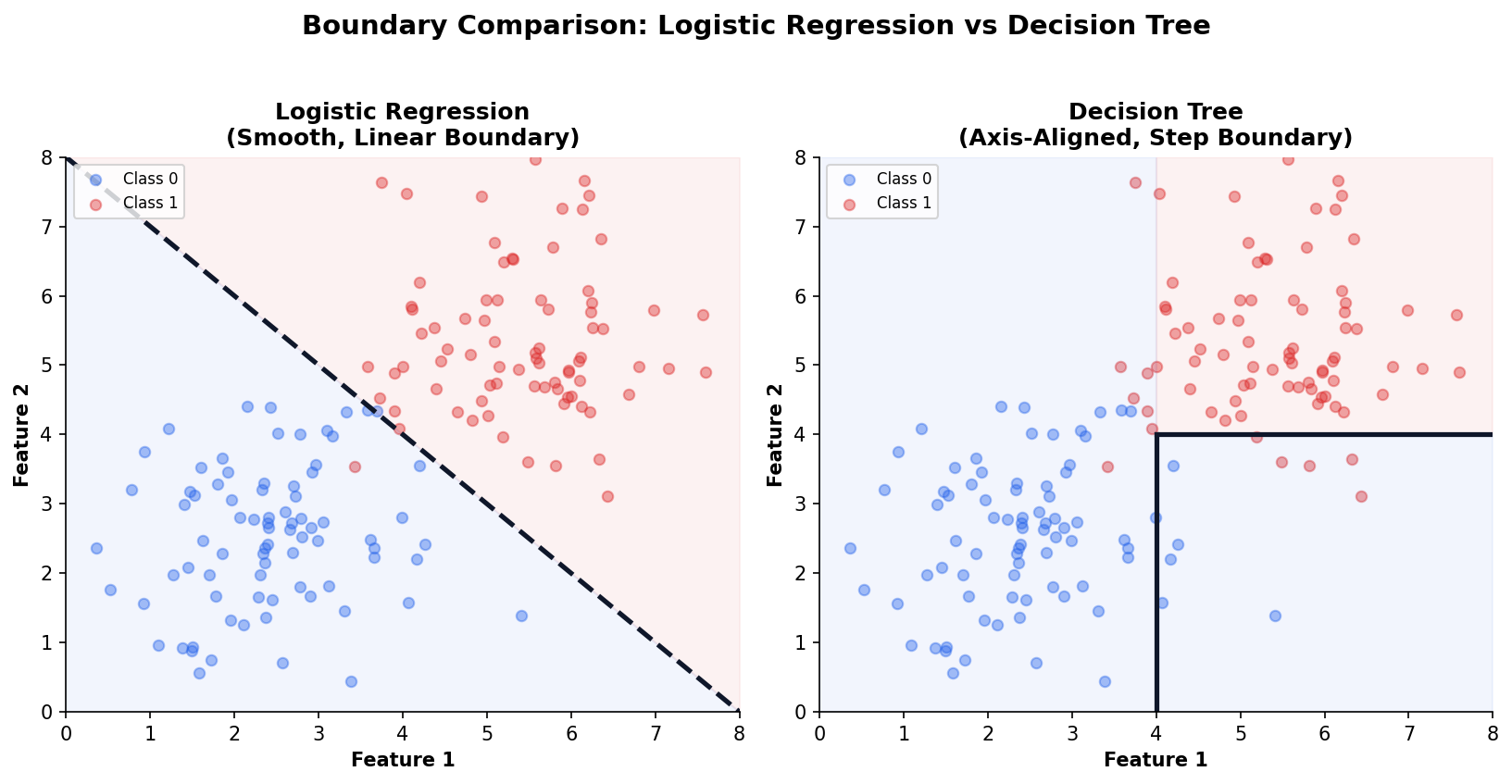

Logistic Regression vs Decision Trees: When to Use Which

Both can classify. The choice depends on your data and your audience.

| Criterion | Logistic Regression | Decision Tree |
|---|---|---|
| Interpretability | Coefficients + OR | If-then rules |
| Feature Types | Numeric (needs encoding) | Numeric + categorical natively |
| Linearity | Assumes linear log-odds | No linearity assumption |
| Interactions | Must add manually | Discovers automatically |
| Speed | Very fast | Fast (slower for ensembles) |

Preview

Next week we explore decision trees, random forests, and gradient boosting — and when they outperform logistic regression.

Three Logistic Regression Mistakes to Avoid

Using Accuracy on Imbalanced Data

Always check class balance first. If 99% of cases are negative, accuracy is useless. Use F1, AUC, or precision-recall instead.

"Our fraud model has 99.7% accuracy!" — it predicts “no fraud” for everything.

Ignoring Odds Ratio Direction

OR = 0.5 means protective (50% lower odds), not harmful. Misreading direction leads to exactly wrong recommendations.

"OR = 0.35, so scholarships increase dropout risk!" — it’s the opposite.

One Threshold for All

Different business contexts require different thresholds. Fraud detection (low threshold) ≠ churn prediction (balanced) ≠ medical screening (very low).

"We use 0.5 for everything." — one size never fits all.

Each mistake causes real harm: wrong metrics mislead leadership, wrong direction inverts policy, and wrong thresholds cost money.

Prevention

Always: (1) check class balance, (2) verify OR direction with domain logic, (3) tune threshold to business cost structure.

Session 2 Key Takeaways

- Logistic regression predicts probabilities for binary (yes/no) outcomes — bounded between 0 and 1.

- Odds ratios (e^b) make coefficients interpretable for stakeholders; no math degree required.

- Accuracy lies in imbalanced data — always check precision, recall, F1, and AUC.

- Threshold choice is a business decision, not a statistical one — align it with the cost of errors.

- Decision trees (Week 8) handle non-linear boundaries and multiclass outcomes naturally.

Next Week: Tree-Based Methods — Decision Trees, Random Forests, and Gradient Boosting

Week 8 Preview

Tree-Based Methods

Decision Trees — interpretable if-then rules

Random Forests — ensemble power

Gradient Boosting — state-of-the-art tabular performance

Lab 7: Build a Philippine credit scoring model, interpret odds ratios, and optimize the threshold for a business objective.